How to Answer System Design Questions: A Step-by-Step Interview-Safe Method

You understand distributed systems. You know caching strategies, database sharding, load balancing, and microservices architectures. Yet when the interviewer asks, “Design a URL shortener,” you freeze. Not because you lack knowledge, but because you don’t know how to answer system design questions in a structured, interview-safe way that consistently scores points.

This guide solves that execution problem. You’ll learn the exact step-by-step method that transforms technical knowledge into clear, structured interview answers that interviewers reward.

Last updated: Feb. 2026

Table of Contents

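- 1. The Execution Problem Most Candidates Face

- 2. Step 1: Clarify Requirements Before Designing Anything

- 3. Step 2: Define Scope and Assumptions Out Loud

- 4. Step 3: Present a High-Level Design First

- 5. Step 4: Deepen Selectively Instead of Designing Everything

- 6. Step 5: Address Scalability, Bottlenecks, and Trade-Offs

- 7. Step 6: Handle Follow-Up Questions Without Losing Structure

- 8. Turning Knowledge Into Interview Performance

- 9. FAQs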

The Execution Problem Most Candidates Face

Over the past decade coaching senior developers through system design interviews, I’ve identified a consistent pattern. Most failures aren’t knowledge failures.

Engineers who fail system design interviews typically understand distributed systems deeply. They’ve built production systems handling millions of requests. They know CAP theorem, eventual consistency, and database sharding.

Yet they leave interviews hearing feedback like “good ideas, but unstructured,” “jumped around too much,” or “missed key considerations.” The interviewer wasn’t questioning their technical knowledge. They were scoring execution.

Why Knowledge Doesn’t Equal Interview Success

System design interviews test communication and structured thinking, not just technical expertise. You’re being evaluated on how you organize your thoughts, prioritize considerations, and communicate trade-offs under time pressure.

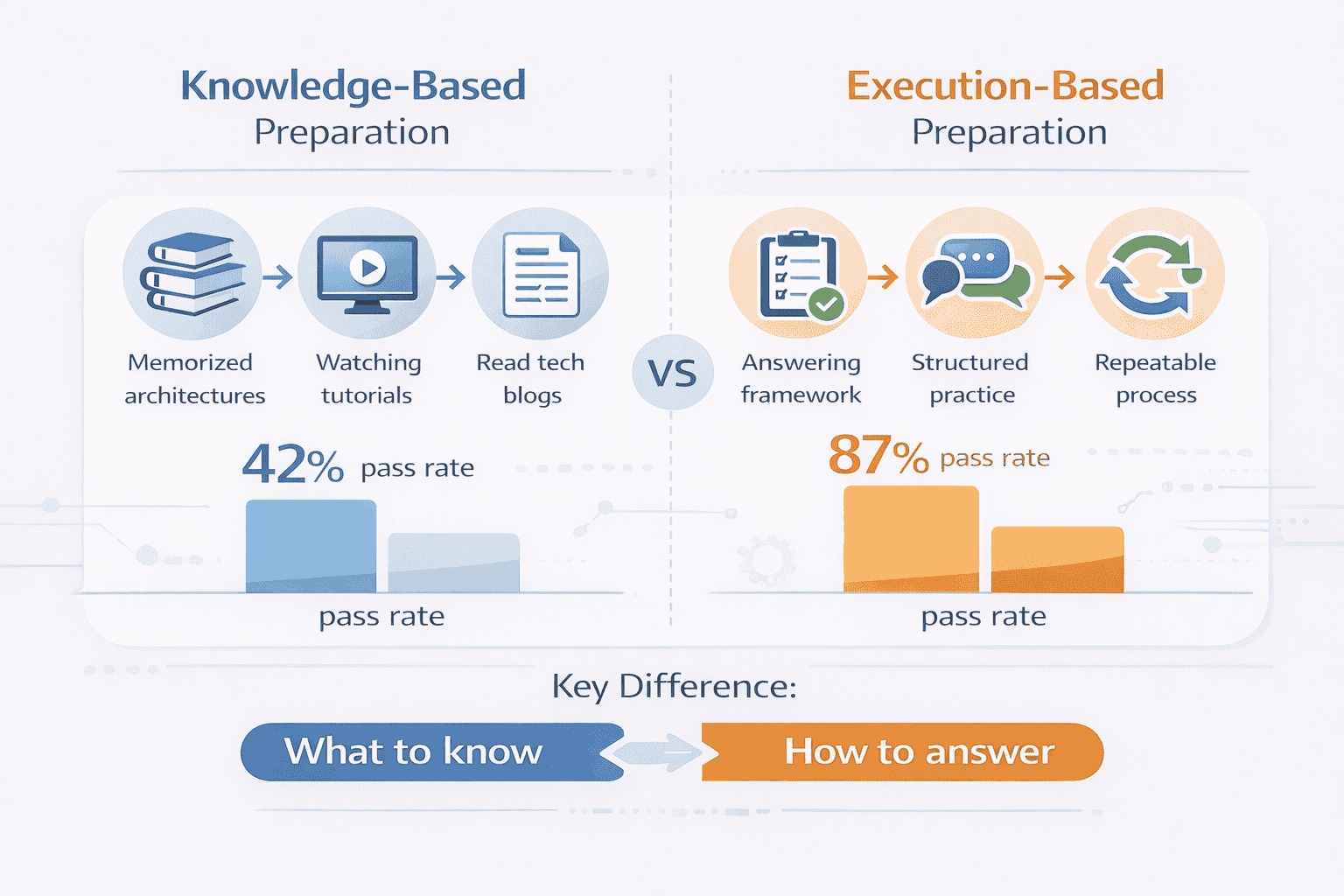

Most candidates prepare by memorizing architectures. They study Netflix’s microservices, Uber’s real-time matching, or Twitter’s feed architecture. They read engineering blogs and watch mock interviews.

Then they enter the interview room. The interviewer asks, “Design a messaging system for a mobile app.” The candidate knows about message queues, WebSockets, push notifications, and database design. But they don’t know where to start, what to cover next, or when to stop.

The Real Problem: Lack of an Answering Framework

Most system design preparation focuses on what to know. It should focus on how to answer. The difference determines whether you pass or fail.

Without a repeatable answering method, you improvise every time. You might start with database schema in one interview and API design in another. You might spend 15 minutes on caching strategies and run out of time before discussing scalability. You might forget to clarify critical requirements entirely.

Improvisation creates inconsistency. Inconsistency creates anxiety. Anxiety degrades performance.

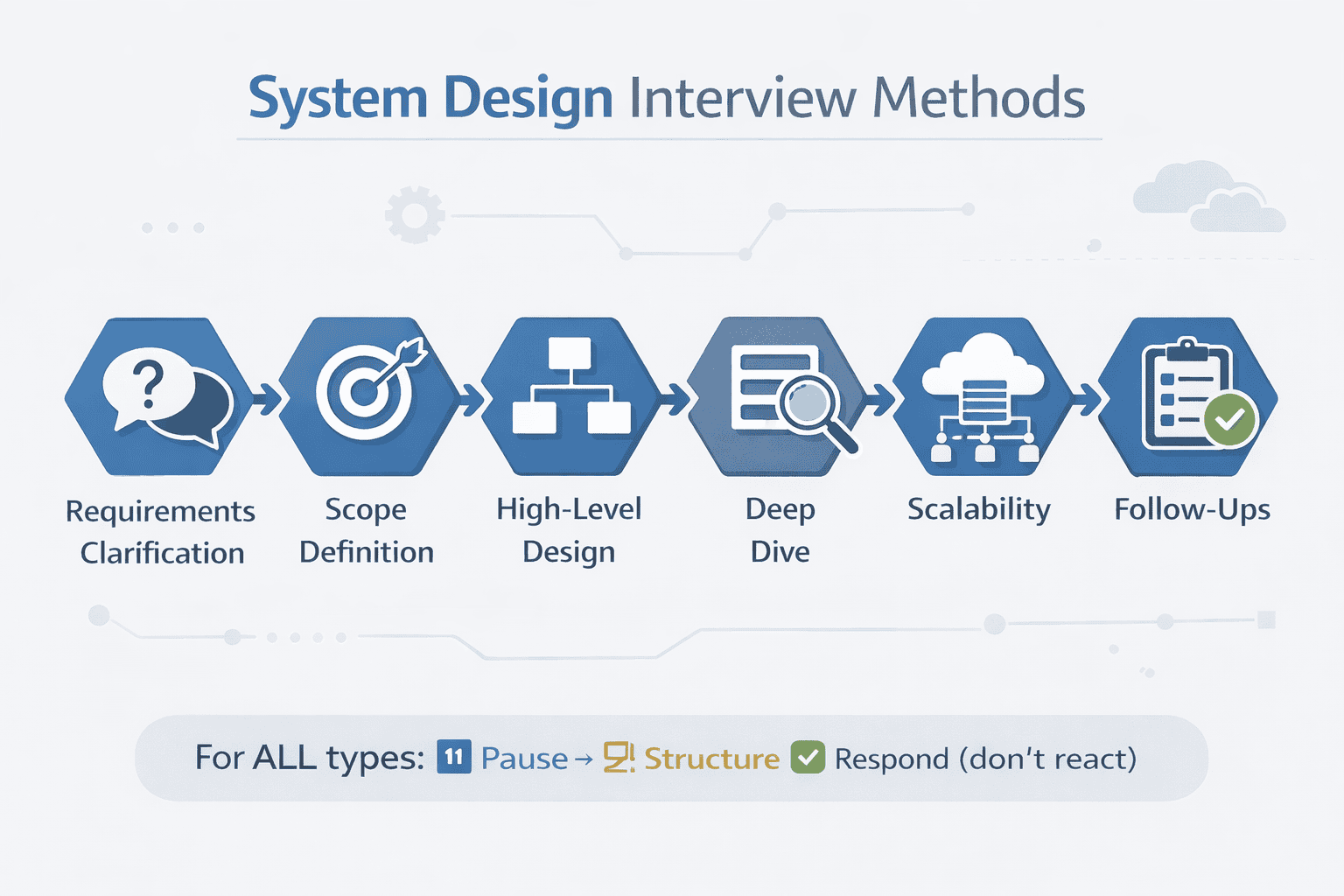

The method in this guide eliminates improvisation. You’ll learn a six-step answering framework that works regardless of the problem—URL shortener, messaging system, recommendation engine, or payment platform. The framework focuses on sequence and communication, not memorized solutions.

How This Method Differs From Traditional Preparation

Traditional preparation teaches architectures. This method teaches execution. You’ll learn when to clarify requirements, how to state assumptions clearly, which components to present first, and how to deepen selectively without losing structure.

Each step has specific signals interviewers look for. Step 1 demonstrates judgment through clarifying questions. Step 2 shows seniority through explicit assumptions. Step 3 proves communication skills through clear high-level presentation. Steps 4-6 reveal depth, prioritization, and adaptability.

By the end of this guide, you’ll have a reusable answering playbook. Not a collection of memorized systems, but a disciplined execution pattern that works across companies and interview formats.

What Makes an Answer “Interview-Safe”

Interview-safe means the answer consistently scores points regardless of the specific problem or interviewer style. It means you demonstrate the signals interviewers are trained to evaluate: structured thinking, clear communication, appropriate depth, and trade-off reasoning.

Interview-safe answers follow predictable patterns. They start with clarification, not assumptions. They present high-level design before low-level details. They address scalability systematically, not randomly. They handle follow-up questions by re-anchoring to the original design.

The six steps you’ll learn create interview-safety through consistent structure. Interviewers can follow your logic easily. They can assess your judgment clearly. They can score you fairly.

Step 1: Clarify Requirements Before Designing Anything

The first 3-5 minutes of your system design answer determine 40% of your final score. This step teaches you how to use those minutes strategically.

Most candidates hear the problem and immediately start designing. “Design a URL shortener” triggers architectural thinking. They mentally draft database schemas, API endpoints, and caching layers while the interviewer is still explaining the problem.

This immediate design instinct fails for two reasons. First, you’re making hidden assumptions the interviewer may not share. Second, you’re signaling that you jump to solutions without understanding requirements—a critical red flag for senior roles.

Why Clarification Comes First

Interviewers assess judgment before technical skills. Clarifying requirements demonstrates that you understand systems are built for specific use cases, not abstract problems.

When you ask clarifying questions, you’re showing the interviewer several things simultaneously. You understand that “design a URL shortener” has dozens of possible implementations depending on scale, features, and constraints. You know that assumptions must be explicit, not implicit. You approach problems systematically rather than impulsively.

Senior developers clarify. Junior developers assume. The first 90 seconds of clarification questions separate candidates more effectively than architectural diagrams.

The Three Categories of Clarifying Questions

Not all clarifying questions score equally. Focus on three categories that consistently impress interviewers: functional scope, scale and performance, and explicit exclusions.

Functional scope questions define what the system actually does. For a URL shortener: “Should the system support custom short URLs, or only auto-generated ones?” “Do we need analytics on click counts and geolocation?” “Should links expire after a certain time?”

Scale and performance questions establish the magnitude you’re designing for. “How many URL shortenings per day?” “What’s the expected read-to-write ratio?” “What are the latency requirements for redirects?”

Explicit exclusions set boundaries. “Are we designing authentication and user accounts, or assuming that’s handled separately?” “Should we worry about malicious URL detection, or is that out of scope?” “Do we need to support URL preview images and metadata?”

📊 Table: Essential Clarifying Questions by System Type

Different system design problems require different clarification patterns. This table shows the highest-value questions for common interview scenarios, organized by the evaluation signal they demonstrate to interviewers.

| System Type | Functional Scope | Scale & Performance | Explicit Exclusions |
|---|---|---|---|
| URL Shortener | Custom URLs? Analytics? Expiration? | Daily shortenings? Read/write ratio? Redirect latency? | Auth system? Malicious URL detection? Preview metadata? |
| Messaging System | 1-to-1 vs groups? Media support? Read receipts? | Messages per second? Storage duration? Concurrent users? | End-to-end encryption? Video calls? Message search? |
| News Feed | Post types? Ranking algorithm? Notifications? | Daily active users? Feed load time? Post frequency? | Ads? Content moderation? Trending topics? |
| Payment System | Currency support? Refunds? Recurring billing? | Transactions per day? Geographic distribution? Compliance? | Fraud detection? Payment methods? Reporting dashboard? |
| Video Platform | Upload quality? Live vs recorded? Recommendations? | Concurrent viewers? Video length limits? Storage needs? | Comments? Monetization? Content delivery network? |

How to Ask Questions Without Looking Clueless

There’s a skill to asking clarifying questions that demonstrate competence rather than confusion. The difference lies in how you frame the questions.

Weak framing: “What should the system do?” This signals you don’t understand the basic problem.

Strong framing: “I’m assuming we need to handle custom short URLs and basic analytics. Should I design for that, or is auto-generation sufficient?” This signals you already have a reasonable mental model and you’re validating it.

The pattern is: state a reasonable assumption, then ask if it holds. “I’m thinking 1 million shortenings per day and 10x read traffic. Does that match your expectations?” The interviewer either confirms or adjusts. Either way, you’ve shown you can estimate reasonable numbers.

When to Stop Clarifying and Start Designing

Clarification shouldn’t exceed 3-5 minutes in a 45-minute interview. You need clear signals for when you’ve clarified enough.

Stop clarifying when you can answer three questions confidently: What are the core use cases? What scale am I designing for? What’s explicitly out of scope? If you can answer these, you have sufficient clarity to proceed.

Watch for interviewer cues. If they start saying “sounds reasonable” or “let’s assume that’s out of scope for now,” they’re signaling it’s time to design. Don’t over-clarify. Moving to design shows confidence and time management.

Before proceeding to Step 2, restate the problem in your own words. “So I’m designing a URL shortener for 1 million daily shortenings, 10 million daily redirects, supporting auto-generated short codes with basic analytics. User accounts and malicious URL detection are out of scope. Is that correct?” This 10-second summary gets explicit confirmation and creates a clear foundation for the rest of your answer.

Step 2: Define Scope and Assumptions Out Loud

After clarifying requirements, explicitly state your assumptions about scale, constraints, and system boundaries. This step transforms vague understanding into concrete design parameters.

Many candidates skip this step entirely. They clarify requirements in Step 1, then jump directly to drawing architecture diagrams. The problem? All those assumptions in their head—about traffic patterns, data volume, latency requirements—remain invisible to the interviewer.

Invisible assumptions create two problems. First, the interviewer can’t evaluate whether your assumptions are reasonable. Second, when your design choices don’t align with the interviewer’s mental model, they assume you made a mistake rather than a different assumption.

Why Explicit Assumptions Signal Seniority

Junior engineers design for the problem as stated. Senior engineers design for specific constraints and explicitly state them. This distinction matters enormously in interviews.

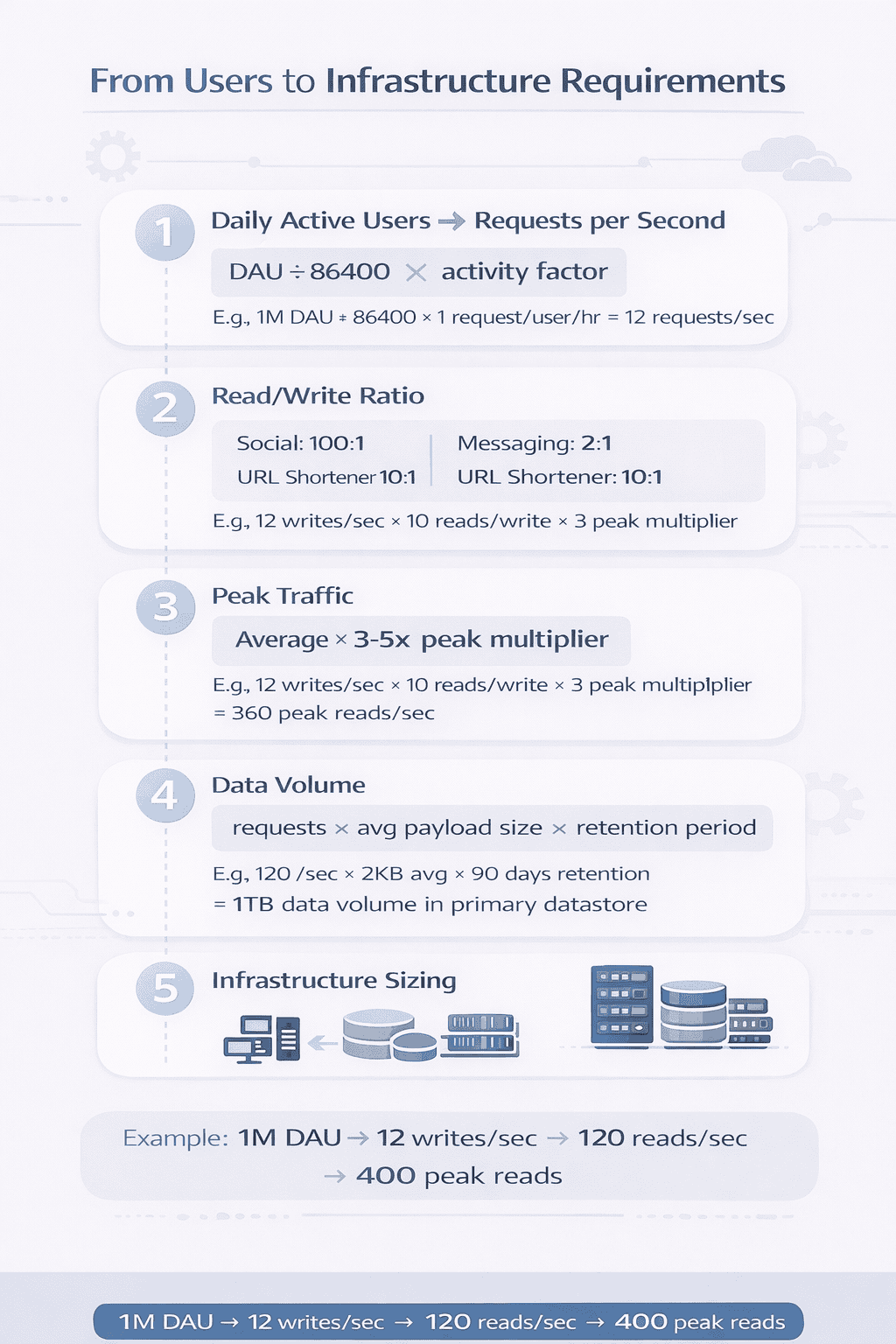

When you say out loud, “I’m assuming 1 million daily active users who each create about one short link a day. That’s roughly 12 writes per second on average, around 40 at peak, and with a 5:1 read-to-write ratio, about 60 reads per second on average and 200 at peak,” you’re demonstrating several senior capabilities simultaneously.

You show you can estimate scale from user counts. You understand that read and write volumes can differ substantially. You calculate peak traffic, not just averages. You make infrastructure decisions based on concrete numbers, not gut feelings.

Interviewers don’t expect perfect estimates. They expect reasonable estimates stated clearly. The clarity matters more than precision.

The Four Categories of Assumptions to State

Not every assumption deserves airtime. Focus on four categories that directly influence architectural decisions: traffic and scale, data characteristics, latency and availability, and cost constraints.

Traffic and scale assumptions establish the magnitude of your design. For a URL shortener: “Assuming 1 million daily shortenings means roughly 12 writes per second average, 40 at peak. With a typical 10:1 read-to-write ratio for shorteners, that’s 120 reads per second average, 400 at peak.”
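The arithmetic behind these numbers is simple enough to script while you practice. The Python sketch below assumes a peak-to-average factor of 3.5, a hypothetical value picked to land near the “40 at peak” figure; real traffic curves vary by product and time zone.

```python
SECONDS_PER_DAY = 86_400


def estimate_traffic(daily_writes: int, read_write_ratio: float, peak_factor: float) -> dict:
    """Back-of-envelope request rates derived from a daily write volume.

    peak_factor is an assumed peak-to-average multiplier, not a measured value.
    """
    avg_writes = daily_writes / SECONDS_PER_DAY
    avg_reads = avg_writes * read_write_ratio
    return {
        "avg_writes": round(avg_writes, 1),
        "peak_writes": round(avg_writes * peak_factor, 1),
        "avg_reads": round(avg_reads, 1),
        "peak_reads": round(avg_reads * peak_factor, 1),
    }


print(estimate_traffic(1_000_000, read_write_ratio=10, peak_factor=3.5))
# → {'avg_writes': 11.6, 'peak_writes': 40.5, 'avg_reads': 115.7, 'peak_reads': 405.1}
```

Rounded the way you would say them aloud (12, 40, 120, 400), these match the figures above.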

Data characteristics assumptions shape storage decisions. “Assuming URLs average 100 characters and metadata adds another 100 bytes, we’re looking at roughly 200 bytes per record. At 1 million daily writes, that’s 200MB per day, or about 73GB per year of raw data before any indexing.”
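The storage estimate follows the same pattern. This sketch assumes decimal units (1GB = 10^9 bytes) and, like the estimate above, ignores indexes, replication, and compression.

```python
def raw_storage_gb(daily_records: int, bytes_per_record: int, days: int) -> float:
    """Raw data volume in decimal GB, before indexes or replicas."""
    return daily_records * bytes_per_record * days / 1e9


BYTES_PER_RECORD = 100 + 100  # ~100-byte URL plus ~100 bytes of metadata

print(raw_storage_gb(1_000_000, BYTES_PER_RECORD, days=1))    # → 0.2  (200MB per day)
print(raw_storage_gb(1_000_000, BYTES_PER_RECORD, days=365))  # → 73.0 (73GB per year)
```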

Latency and availability assumptions drive architecture patterns. “For URL redirects, I’m targeting sub-100ms latency at the 95th percentile and 99.9% availability. We can tolerate higher latency for the shortening operation itself—500ms would be acceptable.”
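An availability target is easier to reason about once it’s converted into a downtime budget. A small sketch, assuming a 365-day year:

```python
HOURS_PER_YEAR = 365 * 24  # ignores leap years


def downtime_budget_hours(availability_pct: float) -> float:
    """Hours of downtime per year permitted by an availability target."""
    return HOURS_PER_YEAR * (1 - availability_pct / 100)


for target in (99.0, 99.9, 99.99):
    hours = downtime_budget_hours(target)
    print(f"{target}%: {hours:.2f} hours/year, {hours * 60 / 12:.1f} minutes/month")
```

At 99.9%, the budget is about 8.76 hours a year, or roughly 44 minutes a month, which is a useful number to have ready when the interviewer asks how much failover time you can afford.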

Cost constraints guide technology choices. “Assuming we want to minimize infrastructure costs while maintaining performance, I’ll prioritize efficient data structures and caching over pure horizontal scaling.”

How to Present Numbers Without Memorizing Formulas

You don’t need to memorize conversion factors or database capacity formulas. You need to show reasonable approximation skills and transparent reasoning.

Start with powers of 10 and common patterns. A day has roughly 100,000 seconds (86,400 exactly, but 100,000 is easier to calculate with). A million users making one request per day is roughly 10 requests per second. Ten million is 100 per second.

For storage, know that 1 million records at 1KB each is 1GB. 1 million at 100 bytes is 100MB. Everything else derives from these basics.

When presenting numbers, show your math out loud. “So 1 million daily URLs, divided by 100,000 seconds in a day, gives us about 10 writes per second. Let’s say 12 to be safe.” The interviewer follows your logic and can correct if your assumptions are off.
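The 100,000-second shortcut is deliberately low, which is why rounding up (“let’s say 12”) is sensible. A quick check in Python:

```python
def per_second(daily_count: float, seconds_per_day: float = 86_400) -> float:
    """Average rate per second for a daily count."""
    return daily_count / seconds_per_day


shortcut = per_second(1_000_000, seconds_per_day=100_000)  # interview mental math
exact = per_second(1_000_000)                              # 86,400 seconds per day
print(shortcut, round(exact, 2))  # → 10.0 11.57
print(f"shortcut underestimates by {1 - shortcut / exact:.0%}")  # → shortcut underestimates by 14%
```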

If you make a calculation error, the interviewer will often help correct it. What matters is demonstrating the reasoning process, not perfect mental math.

Pattern: The Assumption Statement Formula

Use this formula when stating assumptions: “Assuming [number/constraint], which means [implication], so I’ll [architectural decision].”

Example: “Assuming 400 peak reads per second, which means a single database server could handle this initially, so I’ll start with one primary database and add read replicas as load approaches that server’s capacity.”

The formula connects assumptions to decisions transparently. The interviewer sees your reasoning path and can challenge specific links in the chain rather than questioning your entire design.

This explicit connection-making is what separates senior candidates from junior ones. Junior candidates make decisions and hope they’re right. Senior candidates show why decisions follow from constraints.

Step 3: Present a High-Level Design First

With requirements clarified and assumptions stated, you’re ready to present architecture. This step teaches how to introduce your design at the right level of abstraction.

The most common mistake at this stage: diving too deep too quickly. Candidates start detailing database schemas, specific API endpoints, or cache invalidation strategies before establishing the overall system structure.

Deep-first design creates confusion. The interviewer loses the big picture while you’re explaining indexes. They can’t assess whether your overall approach makes sense because you haven’t shown it yet.

What “High-Level” Actually Means

High-level design shows major components and their responsibilities, not implementation details. For a URL shortener, high-level means: client, API service, database, cache layer. Not: Postgres with B-tree indexes and Redis with LRU eviction.

Each component should have a clear, simple responsibility stated in one sentence. “The API service handles shortening requests and redirects.” “The cache stores frequently accessed short-to-long URL mappings.” “The database is the source of truth for all URL mappings.”

At high-level, show how components interact but not how they work internally. Draw arrows showing request flow. Label data types being passed. But don’t specify technologies, query patterns, or optimization strategies yet.

The 3-5 Component Rule

Your initial high-level design should have 3-5 major components maximum. More than five indicates you’re including implementation details that belong in the deep-dive phase.

For a URL shortener: (1) Client, (2) API Service, (3) Database, (4) Cache Layer. Four components. Each is essential. Each has a distinct responsibility. The system couldn’t function if any were removed.

If you find yourself drawing 8-10 boxes at the high level, you’re mixing abstraction layers. Collapse related components. “Authentication Service” and “Rate Limiting Service” can become “API Gateway” at high level. You’ll separate them during the deep dive if needed.

The interviewer needs to understand your overall approach in 60-90 seconds. More components means more cognitive load and longer explanation time. Keep it simple first.

How to Introduce Your Design

Begin with a framing statement that orients the interviewer. “I’ll start with a high-level design showing the main components, then we can dive deeper into specific areas based on your questions.”

This statement does three things. It signals you’re presenting high-level first, which shows structured thinking. It promises depth will come later, preventing the interviewer from worrying you’re staying surface-level. It invites the interviewer to guide the depth phase, showing you’re collaborative.

Then walk through your design linearly, following a request path. “When a user wants to shorten a URL, the request hits our API service. The service generates a short code, stores the mapping in the database, and returns the short URL. For redirects, the API service checks the cache first. If not found, it queries the database, updates the cache, and redirects the user.”
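The request path above can be modeled in a few lines to check that each component has exactly one job. This is a toy sketch, not a production design: plain Python dicts stand in for the database and cache, and the class and method names are hypothetical.

```python
import secrets
import string
from typing import Dict, Optional

ALPHABET = string.ascii_letters + string.digits  # 62 URL-safe characters


class UrlShortener:
    """Toy model of the API service sitting in front of a cache and a database."""

    def __init__(self) -> None:
        self.database: Dict[str, str] = {}  # source of truth: short code -> long URL
        self.cache: Dict[str, str] = {}     # frequently accessed mappings

    def shorten(self, long_url: str) -> str:
        # Generate a short code, store the mapping, return the code.
        # Regenerating on a clash keeps codes unique (a typical deep-dive topic).
        code = self._random_code()
        while code in self.database:
            code = self._random_code()
        self.database[code] = long_url
        return code

    def redirect(self, code: str) -> Optional[str]:
        # Check the cache first.
        if code in self.cache:
            return self.cache[code]
        # On a miss, query the database and update the cache.
        long_url = self.database.get(code)
        if long_url is not None:
            self.cache[code] = long_url
        return long_url  # an HTTP layer would turn this into a 301/302 redirect

    @staticmethod
    def _random_code(length: int = 7) -> str:
        return "".join(secrets.choice(ALPHABET) for _ in range(length))


service = UrlShortener()
code = service.shorten("https://example.com/some/long/path")
print(service.redirect(code))  # → https://example.com/some/long/path
```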

This request-path narration helps the interviewer follow your logic. They see data flow, understand responsibilities, and can visualize the system operating.

What Good Looks Like: The Clear Design Signal

You know your high-level design is working when the interviewer nods and says something like “makes sense so far” or “okay, I follow.” These aren’t throwaway comments. They’re signals that your communication is landing.

If instead the interviewer looks confused or asks clarifying questions about basic flow, your design isn’t clear enough. You’ve either included too much detail, used too many components, or failed to explain data flow coherently.

A good high-level design takes 2-3 minutes to present including a simple diagram. If you’re hitting 5+ minutes before finishing high-level, you’re too detailed.

The Transition to Deep Dive

After presenting high-level design, don’t immediately start diving deep. Pause and check alignment. “Does this high-level approach make sense before I go deeper into specific components?”

This pause serves two purposes. It gives the interviewer a chance to redirect if your overall approach is problematic. And it creates a natural transition point to the deep-dive phase, which follows a different pattern than high-level presentation.

The interviewer will typically either approve the approach and ask you to go deeper in specific areas, or they’ll suggest adjustments to the high-level design. Either response is valuable. Approval means your foundation is solid. Suggested changes mean you’re catching issues early before investing time in detailed design.

This structured pause demonstrates professional communication skills. You’re not bulldozing forward. You’re checking that your audience is with you before adding complexity.

Step 4: Deepen Selectively Instead of Designing Everything

After presenting high-level architecture, most candidates make a critical mistake. They try to design every component in detail before the interviewer signals interest in specific areas.

This exhaustive approach wastes time and misses the point of system design interviews. Interviewers don’t want to see you design everything. They want to see you prioritize what matters and go deep in the right places.

Selective deepening demonstrates senior judgment. It shows you understand which components are architecturally interesting, which decisions involve meaningful trade-offs, and where complexity actually lives.

How Interviewers Signal Where to Go Deep

Interviewers guide depth through questions and prompts. After you present high-level design, they’ll ask something like “How would you handle the URL generation?” or “Walk me through the caching strategy.”

These aren’t random questions. They’re deliberate signals about which areas to explore. The interviewer wants to see your reasoning about that specific component, not surface-level treatment of ten components.

If the interviewer asks about database design, that’s your cue to discuss schema, indexes, partitioning strategies, and query patterns for that component. Don’t also start detailing API endpoint structures and cache invalidation logic. Stay focused on what was asked.

When no specific guidance comes, choose one or two components that involve the most interesting trade-offs for this particular system. For a URL shortener, that’s usually the short code generation strategy and the caching layer. For a messaging system, it’s message delivery guarantees and conversation storage.

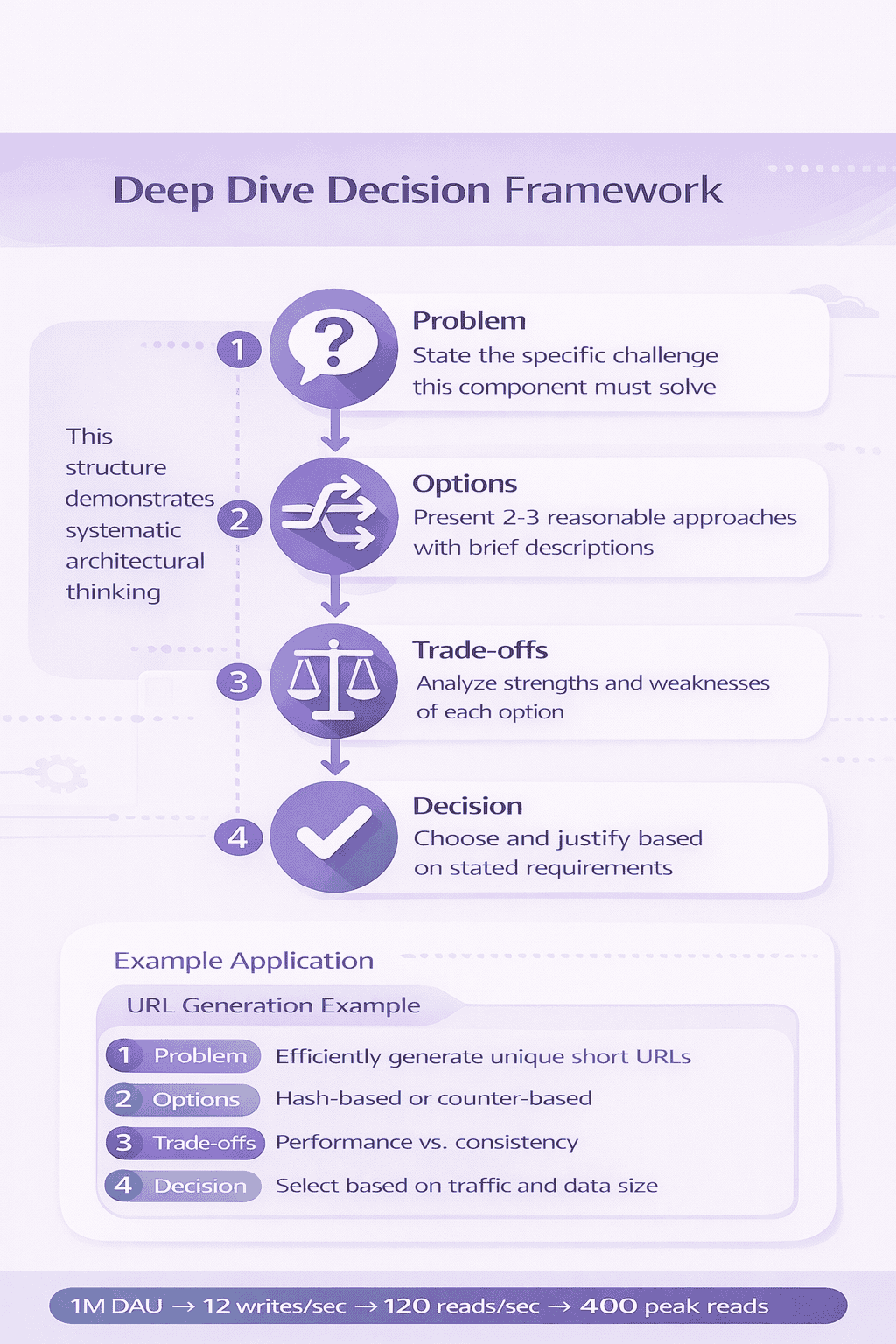

The Deep Dive Structure: Problem, Options, Trade-offs, Decision

When diving deep into any component, follow a consistent four-part structure that demonstrates architectural thinking.

Problem: State what challenge this component must solve. “For URL generation, we need a strategy that produces unique short codes while minimizing collisions and avoiding predictability.”

Options: Present 2-3 reasonable approaches. “We could use auto-incrementing IDs, random generation with collision checking, or hash-based generation from the original URL.”

Trade-offs: Analyze each option’s strengths and weaknesses. “Auto-increment is simple and guarantees uniqueness but makes URLs predictable and creates a single point of coordination. Random generation avoids predictability but requires collision checking. Hashing produces deterministic codes, which deduplicates repeated URLs, but a hash truncated to a short code can still collide, and the same long URL always maps to the same code, which limits flexibility such as custom or per-user short codes.”

Decision: Choose an approach and justify it based on your stated requirements. “Given our requirement for unpredictability and our scale of 1 million daily URLs where collision probability remains low, I’ll use random generation with collision checking.”
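A minimal sketch of the chosen approach, assuming 7-character base62 codes (a common choice, but an assumption here) and an in-memory set standing in for the database’s uniqueness check. The second function puts a number on “collision probability remains low.”

```python
import secrets
import string
from typing import Set

ALPHABET = string.ascii_letters + string.digits  # base62: 62 characters


def generate_unique_code(existing: Set[str], length: int = 7, max_attempts: int = 5) -> str:
    """Random short code with collision checking and a bounded number of retries."""
    for _ in range(max_attempts):
        code = "".join(secrets.choice(ALPHABET) for _ in range(length))
        if code not in existing:  # collision check
            existing.add(code)
            return code
    raise RuntimeError("repeated collisions; the code space may be too small")


def collision_chance(existing_count: int, length: int = 7) -> float:
    """Probability that a single new random code matches an existing one."""
    return existing_count / len(ALPHABET) ** length


# After one year at 1 million new URLs per day:
print(f"{collision_chance(365 * 1_000_000):.4%}")  # → 0.0104%
```

In a real database, the check and the insert need to be atomic, for example through a unique constraint on the short-code column, so two concurrent writers cannot claim the same code.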

This structure demonstrates you can think through architectural decisions systematically, not just pick technologies you’re familiar with.

Common Deep Dive Topics by System Type

Different systems have predictable areas where interviewers expect depth. Knowing these patterns helps you prepare targeted deep dives.

For URL shorteners: code generation algorithms, collision handling, cache invalidation strategies, analytics tracking without impacting redirect latency.

For messaging systems: message ordering guarantees, delivery confirmation, conversation storage and retrieval, handling offline users, read receipts at scale.

For news feeds: feed generation algorithms, ranking and personalization, cache consistency across updates, handling high-volume posters, notification delivery.

For payment systems: transaction consistency, handling failures and retries, idempotency, reconciliation, fraud detection integration, compliance requirements.

For video platforms: video encoding and quality adaptation, CDN integration, thumbnail generation, playback state synchronization, recommendation system integration.

Study these patterns. When you get a problem type, you’ll immediately know which 2-3 areas deserve deep exploration.

How Deep Is Deep Enough?

A proper deep dive takes 5-8 minutes including discussion with the interviewer. If you’re finishing in 2 minutes, you’re staying too surface-level. If you’re hitting 15 minutes, you’re over-engineering.

You know you’ve gone deep enough when you’ve covered the implementation approach, discussed the primary trade-off, and mentioned at least one edge case or failure scenario. For a caching strategy: algorithm (LRU), eviction policy trade-offs (memory vs hit rate), and what happens when cache fails (fallback to database).
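Those three elements map directly onto code. The sketch below uses an LRU cache built on `collections.OrderedDict` and a read path that falls back to the database if the cache raises; `db_get` is a hypothetical stand-in for a database query.

```python
from collections import OrderedDict
from typing import Callable, Optional


class LRUCache:
    """Fixed-capacity cache that evicts the least recently used entry."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self._data = OrderedDict()  # short code -> long URL, oldest first

    def get(self, key: str) -> Optional[str]:
        if key not in self._data:
            return None
        self._data.move_to_end(key)  # mark as most recently used
        return self._data[key]

    def put(self, key: str, value: str) -> None:
        self._data[key] = value
        self._data.move_to_end(key)
        if len(self._data) > self.capacity:
            self._data.popitem(last=False)  # evict the least recently used entry


def lookup(code: str, cache: LRUCache, db_get: Callable[[str], Optional[str]]) -> Optional[str]:
    """Cache-first read that degrades to database-only reads if the cache fails."""
    try:
        hit = cache.get(code)
    except Exception:
        return db_get(code)  # cache outage: fall back to the database
    if hit is not None:
        return hit
    value = db_get(code)
    if value is not None:
        try:
            cache.put(code, value)
        except Exception:
            pass  # a failed cache fill should not fail the redirect
    return value
```

The memory-versus-hit-rate trade-off shows up in `capacity`: a larger cache keeps more hot URLs at the cost of more memory.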

Watch for interviewer signals. If they say “okay, that makes sense” and don’t ask follow-ups, you’ve covered it adequately. If they keep asking “what about…” questions, they want more depth or different angles.

Don’t be afraid to ask “Would you like me to go deeper on this aspect, or should we move to another component?” This shows you’re aware of time constraints and collaborative about prioritization.

Avoid the Premature Optimization Trap

Many candidates dive deep on optimizations before establishing the basic design works. They discuss Redis cluster configurations before explaining why they need a cache. They detail database sharding strategies when the scale doesn’t warrant it.

Always establish the straightforward solution first, then discuss optimizations if relevant to your scale. “Initially, a single database instance handles our 400 peak reads per second comfortably. When we approach 1000 reads per second, we’d add read replicas. Beyond 5000 reads per second, we’d consider sharding by short code hash.”
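The same progression can be written as a rule of thumb. The thresholds below are the rough figures from this example rather than benchmarks, and `shard_for` is a hypothetical helper showing hash-based shard selection.

```python
import hashlib


def database_plan(peak_reads_per_sec: float) -> str:
    """Map peak read load to the scaling step described above (rough thresholds)."""
    if peak_reads_per_sec < 1_000:
        return "single primary"
    if peak_reads_per_sec <= 5_000:
        return "primary + read replicas"
    return "shard by short-code hash"


def shard_for(code: str, num_shards: int) -> int:
    """Pick a shard from a stable hash of the short code.

    hashlib is used instead of the built-in hash(), which is randomized
    per process for strings and would route the same code inconsistently.
    """
    digest = hashlib.sha256(code.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


print(database_plan(400))     # → single primary
print(database_plan(3_000))   # → primary + read replicas
print(database_plan(20_000))  # → shard by short-code hash
```

Simple modulo sharding moves most keys whenever `num_shards` changes; consistent hashing reduces that movement at the cost of extra complexity.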

This progression shows you understand that premature optimization wastes engineering resources. You design for current requirements with clear scaling paths.

Interviewers appreciate candidates who start simple and scale appropriately. It demonstrates production engineering judgment, not just academic knowledge.

Step 5: Address Scalability, Bottlenecks, and Trade-Offs

After establishing your design and diving deep on key components, you must demonstrate you can reason about scale systematically. This step separates senior engineers from mid-level engineers more than any other.

Scalability discussions aren’t about memorizing solutions to specific bottlenecks. They’re about showing you can identify where systems break under load, propose reasonable solutions, and articulate why those solutions work.

Many candidates approach scalability reactively. The interviewer asks “what if this grows 10x?” and they scramble to suggest solutions. Better candidates address scalability proactively after presenting their initial design.

The Systematic Bottleneck Identification Method

Don’t guess where bottlenecks might appear. Walk through your system component by component and apply increasing load mentally.

Start with your API service. “At 400 requests per second, a few application servers behind a load balancer handle this easily. At 4,000 requests per second, we’d need horizontal scaling, which our stateless design supports. Beyond 40,000 requests per second, we’d consider API gateway rate limiting and request queuing.”

Move to your database. “Our single database handles 400 reads per second comfortably. At 4,000 reads, we add read replicas. At 40,000 reads, we introduce caching for hot URLs and consider sharding if the working set exceeds available memory.”

Continue through each major component. The pattern demonstrates you think about scale quantitatively and have a mental model of when specific architectures become insufficient.

📊 Table: Scaling Thresholds and Solutions by Component

Different components hit scaling limits at predictable thresholds. This table provides rough guidelines for when specific scaling strategies become necessary, helping you reason about system evolution under increasing load.

| Component | Initial Capacity | First Scaling Action | Advanced Scaling |
|---|---|---|---|
| Application Server | ~500 req/sec per instance | Horizontal scaling + load balancer (1K-5K req/sec) | Auto-scaling, regional distribution (50K+ req/sec) |
| Database (Relational) | ~1K queries/sec single instance | Read replicas (5K-10K reads/sec) | Sharding, denormalization (100K+ queries/sec) |
| Cache Layer | ~10K ops/sec single node | Cache cluster with consistent hashing (50K ops/sec) | Multi-tier caching, regional caches (500K+ ops/sec) |
| Message Queue | ~1K messages/sec | Partitioned topics (10K msgs/sec) | Multi-cluster, geographic distribution (100K+ msgs/sec) |
| Object Storage | ~100 uploads/sec | CDN integration (1K uploads/sec) | Multi-region replication (10K+ uploads/sec) |
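Turning a per-instance figure into a fleet size is one division. This sketch takes the table’s rough ~500 req/sec per application server and assumes a 70% target utilization to leave headroom; both numbers are placeholders to replace with your own load-test results.

```python
import math


def instances_needed(peak_load: float, per_instance_capacity: float,
                     target_utilization: float = 0.7) -> int:
    """Instances required so that none runs above the target utilization at peak."""
    usable_capacity = per_instance_capacity * target_utilization
    return math.ceil(peak_load / usable_capacity)


for load in (400, 4_000, 40_000):
    print(f"{load:>6} req/sec -> {instances_needed(load, per_instance_capacity=500)} instances")
```

Under these assumptions, 400, 4,000, and 40,000 requests per second need 2, 12, and 115 application servers respectively.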

How to Present Trade-Offs Effectively

Every scaling decision involves trade-offs. Senior engineers acknowledge these explicitly rather than presenting solutions as purely beneficial.

Weak trade-off statement: “We’ll add caching to improve performance.”

Strong trade-off statement: “We’ll add a Redis cache layer, which reduces database load and improves latency from 50ms to 5ms for cached keys. The trade-off is cache consistency, since we need invalidation strategies when URLs are updated or deleted, plus the operational complexity of managing another system. For our use case, where URLs rarely change after creation, this trade-off favors caching.”

The strong version acknowledges benefits, costs, and why the trade-off makes sense for this specific context. It shows mature engineering judgment.

The CAP Theorem Discussion (When and How)

Many candidates force CAP theorem into every system design interview. This often backfires. CAP theorem is relevant when you’re designing distributed databases or systems with strong consistency requirements.

For a URL shortener, consistency matters during writes (no duplicate short codes) but eventual consistency works fine for reads. You might mention “we prioritize availability over immediate consistency for redirect lookups, accepting that newly created short URLs might take a few seconds to propagate through our cache cluster.”

For a banking system, you’d emphasize consistency: “We require strong consistency for account balances. We’ll accept reduced availability during network partitions rather than risk inconsistent balance states.”

Discuss CAP theorem only when the choice between consistency and availability actually matters for your design. Don’t recite definitions—apply the concepts to specific design decisions.

Failure Scenarios and Recovery Strategies

Scalability isn’t just about handling growth. It’s about staying operational when components fail. Address failure scenarios proactively.

“If our primary database fails, read replicas can serve reads immediately. Writes queue until we promote a replica to primary, which takes 30-60 seconds. For mission-critical writes, we’d implement database clustering with automatic failover.”

“If our cache cluster fails, all traffic hits the database. At our current scale, the database handles this. At 10x scale, we’d implement a second cache tier or circuit breakers to prevent database overload.”
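A circuit breaker is simple enough to sketch. This minimal, single-threaded version (the class name and parameters are illustrative) stops calling the database after a run of consecutive failures, then lets a single trial call through once a cooldown has passed.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitBreaker:
    """Minimal circuit breaker: after `threshold` consecutive failures, reject
    calls for `cooldown` seconds instead of piling more load on the service."""

    def __init__(self, threshold: int = 5, cooldown: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.threshold = threshold
        self.cooldown = cooldown
        self.clock = clock  # injectable so tests can control time
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.cooldown:
                raise RuntimeError("circuit open: request rejected")
            self.opened_at = None  # half-open: let one trial call through
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.failures >= self.threshold:
                self.opened_at = self.clock()  # trip (or re-trip) the breaker
            raise
        self.failures = 0  # success closes the circuit
        return result
```

Wrapping database reads as `breaker.call(lambda: db_get(code))`, where `db_get` is a hypothetical query function, makes a struggling database fail fast instead of absorbing a growing backlog of requests.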

These statements show you design for reality where components fail, not idealized scenarios where everything works perfectly.

Quantifying Improvement Claims

Avoid vague scalability claims like “this will scale much better” or “performance improves significantly.” Quantify whenever possible.

Instead of “adding caching improves performance,” say “adding caching reduces 95th percentile latency from 50ms to 5ms for cached URLs, which represent 80% of traffic based on typical access patterns.”

Instead of “read replicas help with scale,” say “read replicas let us handle roughly 5x the read traffic by spreading reads across 5 replica instances, though write capacity remains limited by the primary database.”

These quantified statements demonstrate you think precisely about system behavior, not just abstractly about architectures.
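A quick calculation shows why the caching claim is scoped to cached URLs. The sketch below assumes, as a simplification, that every hit takes exactly 5ms and every miss exactly 50ms, with the 80% hit rate from the example: the mean drops to about 14ms, but the overall 95th percentile still lands among the misses.

```python
def blended_latency(hit_rate: float, hit_ms: float, miss_ms: float,
                    percentile: float) -> tuple:
    """Mean and percentile latency when a fraction `hit_rate` of requests
    take `hit_ms` and the rest take `miss_ms` (assumes hits are faster)."""
    mean = hit_rate * hit_ms + (1 - hit_rate) * miss_ms
    # Sorted by latency, the fastest `hit_rate` share are hits; any higher
    # percentile falls among the misses.
    pct = hit_ms if percentile <= hit_rate else miss_ms
    return mean, pct


mean, p95 = blended_latency(hit_rate=0.80, hit_ms=5, miss_ms=50, percentile=0.95)
print(f"mean ≈ {mean:.0f} ms, overall p95 = {p95} ms")
```

To move the overall p95, you would need a hit rate above 95% or a faster miss path, which is the kind of precise statement the section recommends.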

The 10x and 100x Mental Exercise

Even if the interviewer doesn’t ask, mentally walk through what breaks at 10x and 100x scale. This preparation lets you answer scalability questions confidently.

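“At 10x our current 1 million daily URLs, so 10 million per day, the single primary database becomes the likely bottleneck because it is the one component we can’t scale out by adding instances. We’d plan write sharding across multiple database instances. Our caching strategy remains effective because redirect traffic stays concentrated on popular URLs, so hit rates hold up as volume grows.”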

“At 100x, 100 million daily URLs, we’re operating at the scale of the largest public URL shorteners. We’d need geographic distribution, multi-region databases, and CDN integration for redirects. Our basic architecture still works, but we’d add layers of optimization and distribution.”

This mental exercise shows you can project how systems evolve, not just design for current requirements.
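The 10x and 100x figures are easier to reason about as per-second rates. This sketch assumes traffic spread evenly over the day (averages only, no peak factor) and the 10:1 read-to-write ratio used in the walkthrough; `writes_per_second` is a hypothetical helper.

```python
SECONDS_PER_DAY = 86_400
READ_WRITE_RATIO = 10  # reads per write, as in the walkthrough


def writes_per_second(daily_writes: int, multiplier: int) -> float:
    """Average write rate after daily volume grows by `multiplier`."""
    return daily_writes * multiplier / SECONDS_PER_DAY


for m in (1, 10, 100):
    wps = writes_per_second(1_000_000, m)
    rps = wps * READ_WRITE_RATIO
    print(f"{m:>3}x: ~{wps:,.0f} writes/s, ~{rps:,.0f} reads/s on average")
```

Multiplying by a peak factor (the walkthrough uses roughly 3-4x) gives the numbers to size each tier against.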

Step 6: Handle Follow-Up Questions Without Losing Structure

The final step addresses how you respond when interviewers probe your design with follow-up questions. This phase reveals whether you truly understand your architecture or just memorized patterns.

Follow-up questions aren’t traps. They’re opportunities to demonstrate depth, adaptability, and honest reasoning. The best candidates welcome these questions because they showcase expertise.

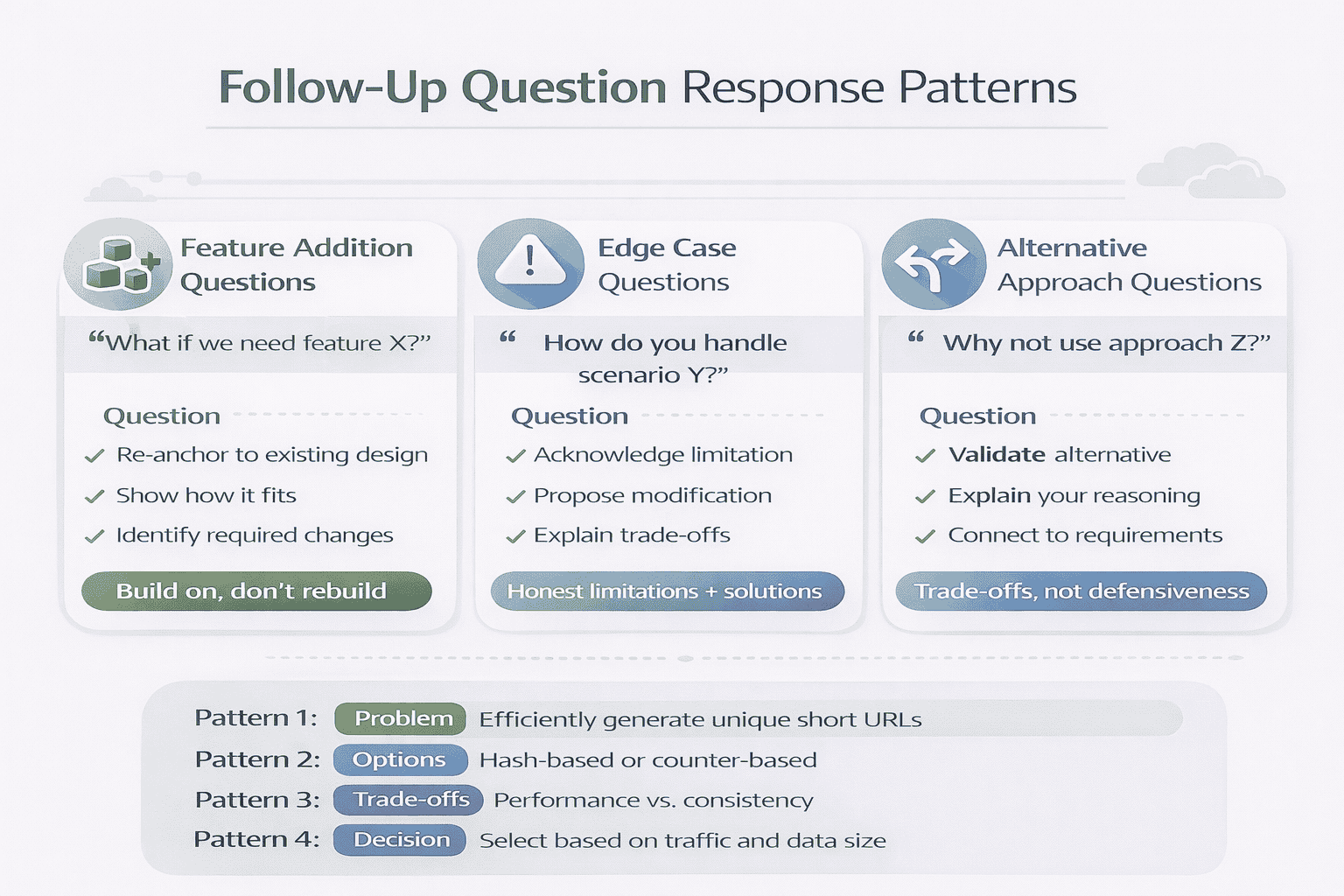

Common follow-ups include “what if we need to support feature X?”, “how would you handle edge case Y?”, or “why didn’t you consider approach Z?” Each type requires a different response pattern.

The Re-Anchor Technique for Feature Additions

When asked “what if we need analytics showing which geographic regions generate most clicks?”, don’t immediately redesign your system. Re-anchor to your existing design first.

“In our current design, we’d add a lightweight event logging layer. When the API service processes a redirect, it asynchronously publishes an event containing the short code, timestamp, and IP address. A separate analytics service consumes these events, performs GeoIP lookup, and aggregates by region.”

Notice the pattern: describe how the new feature fits into the existing architecture. If it doesn’t fit cleanly, explain what you’d need to change and why.

This approach shows you can extend designs incrementally rather than rebuilding from scratch for every new requirement.
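The asynchronous event flow in the analytics answer can be sketched with Python’s standard library. Here an in-process `queue.Queue` stands in for a real message broker, and `FAKE_GEOIP` is a hypothetical lookup table standing in for a GeoIP database; the point is only that the redirect path enqueues and returns while a separate consumer does the lookup and aggregation.

```python
import queue
import threading
from collections import Counter

# Hypothetical stand-in for a real GeoIP lookup (documentation-range IPs).
FAKE_GEOIP = {"203.0.113.7": "EU", "198.51.100.4": "NA"}

events: queue.Queue = queue.Queue()   # in-process stand-in for a broker
clicks_by_region: Counter = Counter()


def handle_redirect(short_code: str, ip: str, timestamp: float) -> None:
    """Redirect path: publish the click event and return immediately."""
    events.put({"code": short_code, "ip": ip, "ts": timestamp})


def analytics_worker() -> None:
    """Consumer: resolve each IP to a region and aggregate click counts."""
    while True:
        event = events.get()
        if event is None:             # sentinel: stop consuming
            break
        region = FAKE_GEOIP.get(event["ip"], "unknown")
        clicks_by_region[(event["code"], region)] += 1


worker = threading.Thread(target=analytics_worker)
worker.start()
handle_redirect("abc123", "203.0.113.7", 1700000000.0)
handle_redirect("abc123", "198.51.100.4", 1700000001.0)
handle_redirect("abc123", "203.0.113.7", 1700000002.0)
events.put(None)                      # shut the worker down after the demo
worker.join()
print(dict(clicks_by_region))
```

Because the redirect handler never waits on the lookup, a slow or failing analytics consumer delays reports rather than redirects.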

Handling Edge Cases Honestly

If the interviewer asks “how do you handle URL shortening when your database is temporarily unavailable?”, be honest about your design’s limitations.

“In my current design, shortening operations fail when the database is unavailable since we need to persist the mapping. If this requirement matters, we could add a write-behind cache that accepts shortening requests, returns a short code immediately, and asynchronously persists to the database when it recovers. The trade-off is potential data loss if the cache fails before persisting.”

Admitting limitations while proposing solutions demonstrates maturity. Pretending your design handles everything perfectly destroys credibility.

Good candidates say “my current design doesn’t handle that well, but here’s how I’d modify it.” Great candidates add “and here’s why I didn’t include that initially”—showing they make conscious trade-offs, not oversights.
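The write-behind idea from the database-outage answer might look like the sketch below. It is a hypothetical in-memory version: a plain `dict` stands in for the database, and the buffer lives in process memory, which is exactly where the stated data-loss trade-off comes from. Because the database can’t be consulted while it is down, the sketch defers the uniqueness check to `flush` and reports any clashes.

```python
import secrets
import string
from collections import OrderedDict

ALPHABET = string.ascii_letters + string.digits  # 62 characters


class WriteBehindShortener:
    """Buffers new mappings in memory while the database is unavailable.
    Anything still buffered is lost if this process dies."""

    def __init__(self, db: dict):
        self.db = db                              # stand-in for the database
        self.pending: OrderedDict = OrderedDict()

    def shorten(self, long_url: str) -> str:
        while True:
            code = "".join(secrets.choice(ALPHABET) for _ in range(6))
            if code not in self.pending:          # only the buffer is checkable now
                break
        self.pending[code] = long_url             # acknowledged before persisting
        return code

    def flush(self, db_available: bool) -> list:
        """Persist buffered mappings in arrival order once the database is back.
        Returns codes that clashed with existing rows (rare, but the client
        already holds them, so they need follow-up)."""
        if not db_available:
            return []
        conflicts = []
        while self.pending:
            code, url = self.pending.popitem(last=False)
            if code in self.db and self.db[code] != url:
                conflicts.append(code)
            else:
                self.db[code] = url
        return conflicts
```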

Responding to Alternative Approach Questions

When asked “why didn’t you use approach Z?”, resist defensiveness. Acknowledge the alternative’s validity, then explain your reasoning.

“You’re right that hash-based URL generation would make codes deterministic, so repeated shortenings of the same URL wouldn’t create new records. I chose random generation because our requirement for custom short URLs makes deterministic hashing problematic: multiple users might want to shorten the same URL with different custom codes. If custom codes weren’t required, hashing would be simpler, though a truncated hash would still need a collision check.”

This response shows you understand trade-offs, not that you’re attached to specific solutions. You’re optimizing for requirements, not defending choices.

The “I Don’t Know” Power Move

If the interviewer asks about a technology or pattern you’re genuinely unfamiliar with, don’t bluff. Say “I’m not familiar with that specific approach. Can you give me a quick overview?”

This honesty is powerful for two reasons. First, it’s truthful, and experienced interviewers spot bluffing quickly. Second, it shows you’re comfortable learning in real time, a critical senior skill.

After they explain, engage with it genuinely: “That’s interesting. It sounds similar to the approach I described, but with the advantage of X. The trade-off would be Y complexity. For our scale, my simpler approach might suffice, but at larger scale this would be worth evaluating.”

You’ve just demonstrated learning agility and comparative analysis in 30 seconds. That’s more impressive than faking knowledge.

Time Management in the Follow-Up Phase

Follow-ups can consume the last 10-15 minutes of your interview. Manage this time by giving focused, structured answers rather than rambling explorations.

Use the 30-60 second response pattern: 10 seconds acknowledging the question, 30 seconds explaining your approach, 20 seconds discussing trade-offs. If the interviewer wants more depth, they’ll ask further questions.

If you’re running short on time and the interviewer asks a complex question, it’s acceptable to say “that’s a great question that deserves detailed discussion. Would you like me to focus on the X aspect given our time constraint, or should I give a higher-level overview of how I’d approach this?”

This shows professional communication and respect for everyone’s time.

Knowing When You’ve Succeeded

You’ve handled follow-ups well when the interviewer’s questions become less probing and more conversational. They shift from “why did you choose X?” to “have you worked with X in production?”

This tonal shift indicates they’re satisfied with your design thinking and are now assessing practical experience. You’ve likely passed the system design evaluation and entered the “would I want to work with this person?” phase.

Other positive signals: the interviewer shares their own experiences with similar systems, suggests alternative approaches conversationally rather than as challenges, or asks about your timeline for starting if you were to receive an offer.

These signs mean your structured, six-step approach worked. You demonstrated exactly what interviewers evaluate: clear communication, systematic thinking, appropriate depth, and collaborative problem-solving.

Applying the Method: URL Shortener Walkthrough

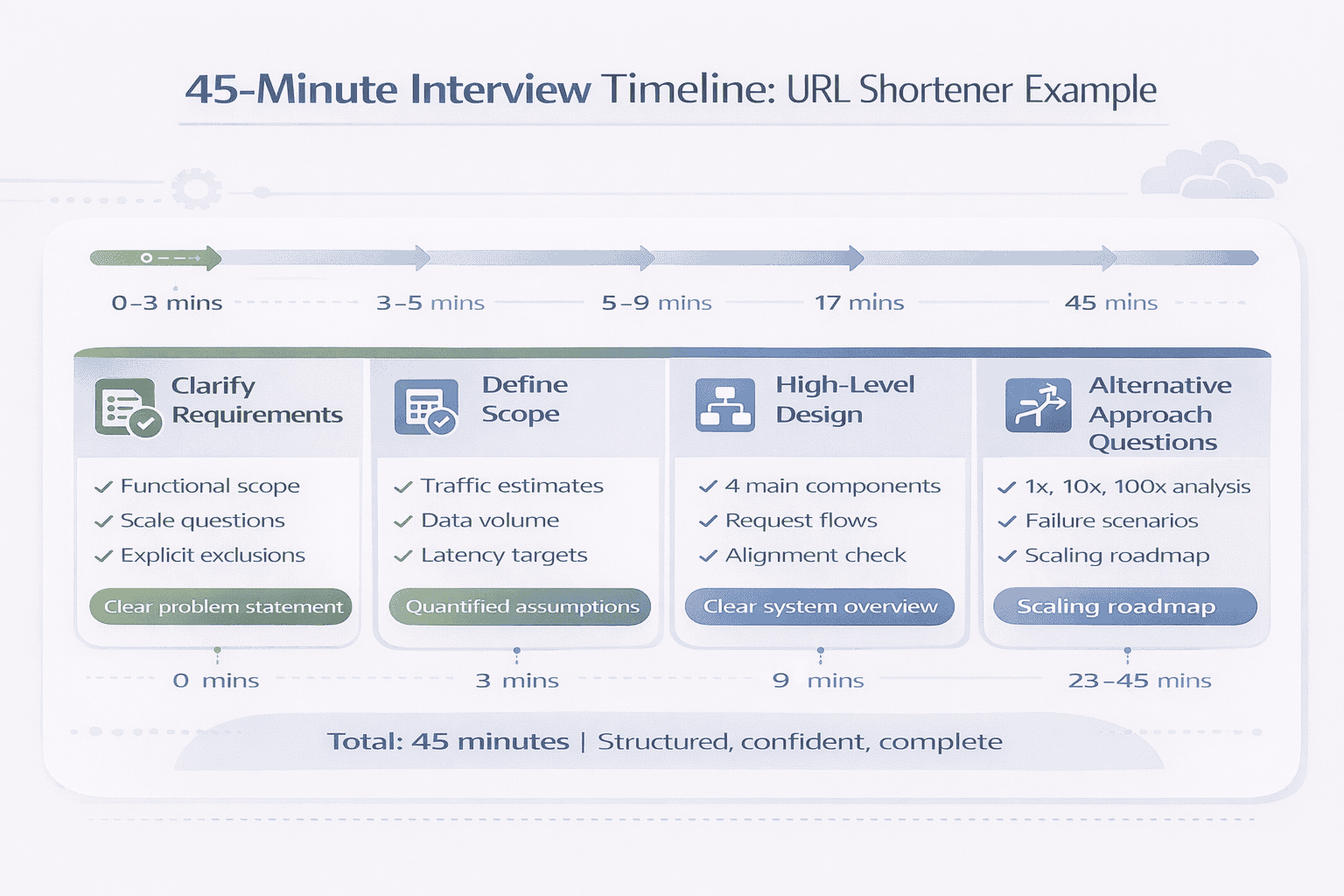

To see how these six steps work together, let’s walk through a condensed URL shortener interview using the exact method you’ve learned. This example focuses on execution flow, not implementation details.

The goal is to show you how the method creates structure and confidence, transforming a potentially anxious 45 minutes into a systematic conversation.

Step 1: Clarifying Requirements (3 minutes)

Candidate: “Before I start designing, I’d like to clarify a few things. Should the system support custom short URLs, or only auto-generated codes?”

Interviewer: “Auto-generated is fine for now.”

Candidate: “Got it. Do we need analytics like click tracking and geographic data?”

Interviewer: “Basic click counts would be good, but we can skip geographic analysis.”

Candidate: “Perfect. For scale, I’m thinking around 1 million shortenings per day. Does that sound reasonable?”

Interviewer: “Yes, that works. And assume 10x read traffic compared to writes.”

Candidate: “Great. So to summarize: auto-generated short URLs, basic analytics, 1 million daily writes, 10 million daily reads. Authentication and malicious URL detection are out of scope. Sound good?”

Interviewer: “Perfect. Go ahead.”

Notice how the candidate stated assumptions as questions, got quick confirmation, and created a clear foundation in under 3 minutes.

Step 2: Defining Scope and Assumptions (2 minutes)

Candidate: “Let me state my key assumptions. 1 million daily shortenings translates to about 12 writes per second average, maybe 40 at peak. With 10x read traffic, that’s 120 reads per second average, 400 at peak.”

“For data characteristics, assuming URLs average 100 characters and we store some metadata, that’s roughly 200 bytes per record. At 1 million daily writes, we’re looking at 200MB per day.”

“For latency, I’m targeting sub-100ms for redirects since users are waiting. Shortening can be slower—500ms is acceptable.”

Interviewer: “Those numbers look reasonable.”

The candidate demonstrated they can estimate scale and stated assumptions explicitly. The interviewer confirmed the reasoning is sound.
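The Step 2 storage figure can be extended to longer horizons with simple arithmetic. This sketch reuses the walkthrough’s assumptions (about 200 bytes per record, 1 million writes per day) and ignores indexes, replicas, and growth; sizes are in decimal gigabytes.

```python
BYTES_PER_RECORD = 200      # assumption: ~100-char URL plus metadata
DAILY_WRITES = 1_000_000


def storage_bytes(days: int) -> int:
    """Raw mapping data after `days`; excludes indexes and replicas."""
    return BYTES_PER_RECORD * DAILY_WRITES * days


for label, days in [("1 day", 1), ("1 year", 365), ("5 years", 5 * 365)]:
    print(f"{label:>7}: {storage_bytes(days) / 1e9:,.1f} GB")
```

Five years of raw mappings comes to roughly 365 GB, comfortably within what a single database server can hold.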

Step 3: High-Level Design (4 minutes)

Candidate: “I’ll start with a high-level design showing the main components, then we can dive deeper based on your questions.”

“The system has four main components: clients, an API service, a database, and a cache layer.”

“For the shortening flow: the client sends a long URL to our API service. The service generates a unique short code, stores the mapping in the database, and returns the short URL to the client.”

“For the redirect flow: the client requests a short URL. Our API service checks the cache first. If found, it redirects immediately. If not, it queries the database, updates the cache, and redirects.”

“Does this high-level approach make sense before I go deeper?”

Interviewer: “Yes, that’s clear. How would you generate the short codes?”

The candidate presented a clean high-level design in under 4 minutes, narrated request flows clearly, and paused to check alignment. The interviewer’s question signals where to go deep.
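The redirect flow narrated in this step is the cache-aside pattern. In this minimal sketch, plain dictionaries stand in for the cache cluster and the database, and the class and method names are hypothetical. It returns a 302 so that every click reaches the service (useful for click counts); a 301 would let browsers cache the redirect and skip the service on repeat visits.

```python
from typing import Dict, Optional, Tuple


class RedirectService:
    """Cache-aside read path: try the cache, fall back to the database,
    then populate the cache so the next lookup is a hit."""

    def __init__(self, cache: Dict[str, str], db: Dict[str, str]):
        self.cache = cache   # stand-in for the cache cluster
        self.db = db         # stand-in for the database
        self.hits = 0
        self.misses = 0

    def redirect(self, short_code: str) -> Tuple[int, Optional[str]]:
        """Return (HTTP status, Location header value)."""
        long_url = self.cache.get(short_code)
        if long_url is not None:
            self.hits += 1
            return 302, long_url
        self.misses += 1
        long_url = self.db.get(short_code)
        if long_url is None:
            return 404, None
        self.cache[short_code] = long_url  # populate the cache for next time
        return 302, long_url
```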

Step 4: Selective Deep Dive (8 minutes)

Candidate: “For short code generation, the challenge is producing unique codes while avoiding predictability and minimizing collisions.”

“Three main options: auto-incrementing IDs, random generation with collision checking, or hash-based generation.”

“Auto-increment guarantees uniqueness but makes URLs predictable and creates a coordination bottleneck. Random generation avoids predictability but requires collision checking. Hashing is deterministic but limits flexibility for future features.”

“Given our scale of 1 million daily URLs, I’ll use random generation with collision checking. We generate a 6-character code from a 62-character alphabet, which gives 62^6, or about 56.8 billion, possible codes. Even after a year of writes, roughly 365 million stored codes, a new code has well under a 1% chance of colliding with an existing one, so retries stay rare.”
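Random generation with a collision check, plus the arithmetic behind the candidate’s estimate, can be sketched as follows. The `exists` callback is a hypothetical stand-in for a database lookup; in practice you would more likely rely on a unique constraint on `short_code` and retry on a violation, which also closes the race between checking and inserting.

```python
import secrets
import string
from typing import Callable

ALPHABET = string.ascii_letters + string.digits  # 62 symbols
CODE_LENGTH = 6
KEYSPACE = len(ALPHABET) ** CODE_LENGTH          # 62**6 = 56,800,235,584


def new_code(exists: Callable[[str], bool]) -> str:
    """Draw random codes until one is unused (collision checking)."""
    while True:
        # secrets draws unpredictable codes, so URLs are hard to guess.
        code = "".join(secrets.choice(ALPHABET) for _ in range(CODE_LENGTH))
        if not exists(code):
            return code


def retry_probability(stored_codes: int) -> float:
    """Chance that one freshly drawn code hits an already-stored code."""
    return stored_codes / KEYSPACE


for years in (1, 5):
    stored = 1_000_000 * 365 * years
    print(f"after {years} year(s): {retry_probability(stored):.2%} chance per insert")
```

After five years the per-insert retry chance rises to about 3%, still a cheap retry; adding a seventh character would multiply the keyspace by 62.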

Interviewer: “Makes sense. What about the database schema?”

Candidate: “Simple schema: a urls table with short_code as primary key, long_url, created_at timestamp, and click_count for analytics. The short_code index makes lookups fast. We’d add an index on created_at for analytics queries by time range.”

The candidate followed the Problem-Options-Tradeoffs-Decision structure, justified choices based on stated requirements, and addressed follow-up questions concisely.
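The schema from the deep dive can be made concrete with SQLite from Python’s standard library. SQLite is used here only so the sketch runs anywhere; the column types are assumptions, and a real deployment would use whatever database the design settled on.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE urls (
        short_code  TEXT PRIMARY KEY,           -- primary key doubles as the lookup index
        long_url    TEXT NOT NULL,
        created_at  TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP,
        click_count INTEGER NOT NULL DEFAULT 0  -- basic analytics
    );
    -- Secondary index for analytics queries over a time range.
    CREATE INDEX idx_urls_created_at ON urls (created_at);
    """
)

conn.execute("INSERT INTO urls (short_code, long_url) VALUES (?, ?)",
             ("abc123", "https://example.com/page"))
conn.execute("UPDATE urls SET click_count = click_count + 1 WHERE short_code = ?",
             ("abc123",))
row = conn.execute("SELECT long_url, click_count FROM urls WHERE short_code = ?",
                   ("abc123",)).fetchone()
print(row)  # ('https://example.com/page', 1)
```

The primary key also rejects a duplicate `short_code` at insert time, which is the database-side half of the collision check.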

Step 5: Scalability Discussion (6 minutes)

Candidate: “Let me walk through how this scales. At current volume—400 peak reads per second—a single database handles this comfortably. The cache layer absorbs most traffic for popular URLs.”

“At 10x scale, about 4,000 peak reads per second, we add read replicas. Our stateless API service scales horizontally by adding instances.”

“At 100x scale, we’re in the range of the largest public URL shorteners. We’d need database sharding, geographic distribution, and CDN integration for redirects.”

Interviewer: “What if the cache fails?”

Candidate: “At current scale, all traffic hitting the database directly is manageable—400 reads per second is well within a single database’s capacity. At 10x scale, we’d implement circuit breakers or a second cache tier to prevent database overload during cache failure.”

The candidate proactively addressed scalability with quantified thresholds, handled the failure scenario honestly, and scaled solutions appropriately to the problem size.

Step 6: Follow-Up Questions (remaining time)

Interviewer: “What if we need to support URL expiration?”

Candidate: “Good question. In our current design, we’d add an expires_at timestamp to the database schema. The API service checks this field during redirects. If expired, return a 404. We’d run a daily cleanup job to delete expired URLs and free up short codes for reuse.”
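The expiration follow-up adds one column, a redirect-time check, and a cleanup job. In this sketch `expires_at` is assumed to hold Unix epoch seconds (NULL meaning “never expires”), `resolve` and `cleanup` are hypothetical function names, and SQLite stands in for the real database.

```python
import sqlite3
import time
from typing import Optional, Tuple

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE urls (short_code TEXT PRIMARY KEY, long_url TEXT NOT NULL, "
    "expires_at REAL)"  # epoch seconds; NULL means the link never expires
)
conn.execute("CREATE INDEX idx_urls_expires_at ON urls (expires_at)")


def resolve(code: str, now: float) -> Tuple[int, Optional[str]]:
    """Redirect-time check: unknown and expired codes both get a 404."""
    row = conn.execute(
        "SELECT long_url, expires_at FROM urls WHERE short_code = ?", (code,)
    ).fetchone()
    if row is None or (row[1] is not None and row[1] <= now):
        return 404, None
    return 302, row[0]


def cleanup(now: float) -> int:
    """Daily job: delete expired rows, freeing their codes for reuse."""
    cur = conn.execute(
        "DELETE FROM urls WHERE expires_at IS NOT NULL AND expires_at <= ?", (now,)
    )
    conn.commit()
    return cur.rowcount


now = time.time()
conn.executemany(
    "INSERT INTO urls VALUES (?, ?, ?)",
    [("old123", "https://example.com/old", now - 60),
     ("new456", "https://example.com/new", now + 86_400),
     ("forevr", "https://example.com/forever", None)],
)
```

Reusing freed codes means an old link shared elsewhere could later point to a new destination, so recycling codes is worth stating as a conscious trade-off.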

Interviewer: “Why not use hash-based generation instead of random?”

Candidate: “That’s a valid alternative. Hashing the long URL is deterministic: the same long URL always produces the same short code, so repeat submissions reuse the existing record. Because we’d truncate the hash to six characters, different URLs can still collide, so we’d keep a collision check either way. The trade-off is that custom codes would be harder to add later, and multiple users shortening the same URL would share one short code, which complicates per-user analytics. Given we haven’t ruled out custom codes long-term, random generation preserves that flexibility.”

The candidate extended the design incrementally for new features and acknowledged alternative approaches without defensiveness, explaining trade-offs clearly.
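Hash-based generation, the alternative raised in the follow-up, can be sketched as follows. SHA-256 and reduction modulo 62^6 are assumed choices, not a prescribed scheme: the same URL always yields the same code, but squeezing a 256-bit digest into six base-62 characters means different URLs can still share a code.

```python
import hashlib
import string

ALPHABET = string.digits + string.ascii_letters  # 62 symbols


def hash_code(long_url: str, length: int = 6) -> str:
    """Deterministic short code: SHA-256 of the URL reduced to `length`
    base-62 digits. Different URLs can still map to the same code."""
    digest = int.from_bytes(hashlib.sha256(long_url.encode("utf-8")).digest(), "big")
    n = digest % (62 ** length)          # keep only `length` base-62 digits
    chars = []
    for _ in range(length):
        n, rem = divmod(n, 62)
        chars.append(ALPHABET[rem])
    return "".join(reversed(chars))      # most significant digit first


print(hash_code("https://example.com/page"))
```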

Why This Example Worked

This interview succeeded because the candidate executed the six-step method systematically. They clarified before designing, stated assumptions explicitly, presented high-level structure first, went deep when signaled, addressed scalability proactively, and handled follow-ups by building on their existing design.

The interviewer could follow the logic easily. They could assess judgment, technical depth, and communication skills clearly. They could score the candidate fairly across all evaluation dimensions.

Most importantly, the candidate maintained control throughout. They weren’t scrambling or improvising. They were executing a practiced method that works regardless of the specific problem.

Turning Knowledge Into Interview Performance

You now have what most system design candidates lack: a repeatable answering framework that transforms technical knowledge into structured interview performance.

The six-step method isn’t about memorizing architectures. It’s about executing consistently under pressure. Clarify requirements before designing. State assumptions explicitly. Present high-level design first. Deepen selectively based on signals. Address scalability systematically. Handle follow-ups by building on your foundation.

This framework works because it aligns with how interviewers evaluate. They’re not testing whether you can recite Netflix’s microservices architecture. They’re assessing whether you can communicate clearly, think systematically, prioritize appropriately, and reason about trade-offs maturely.

Immediate Next Steps

Understanding the method isn’t enough. You need to practice executing it until it becomes automatic.

Start with one practice problem per day. Choose a system type—URL shortener, messaging app, news feed, payment platform. Set a timer for 45 minutes. Execute all six steps out loud, even when practicing alone. This builds muscle memory for the sequence and pacing.

Record yourself or practice with a peer. The framework feels different when you’re speaking it versus thinking it. You’ll discover which transitions feel awkward, which explanations ramble, and which assumptions you forget to state.

Focus on execution, not perfection. A clearly communicated straightforward design beats a poorly explained sophisticated one. Interviewers reward clarity and structure more than architectural complexity.

The Confidence Difference

The most valuable outcome of this method isn’t just better interview performance. It’s the confidence that comes from having a system.

When you walk into a system design interview knowing exactly how you’ll approach any problem, anxiety decreases dramatically. You’re not worried about where to start or what to cover next. You have a map.

That confidence shows. You speak more clearly. You think more sharply. You collaborate more naturally. Interviewers notice and respond positively.

Many candidates I’ve coached report that the interview felt more like a professional architectural discussion than an interrogation. That’s the method working. You’re demonstrating senior-level communication and structured thinking, which is exactly what the interview is designed to evaluate.

Beyond the Interview

The six-step method does more than help you pass interviews. It trains you to think about system design the way senior engineers actually work.

Clarifying requirements before designing? That’s how real projects start. Stating assumptions explicitly? That’s how senior engineers align teams. Presenting high-level design before details? That’s how architects communicate. Addressing scalability proactively? That’s production engineering discipline.

When you get the job, these same skills make you effective in design reviews, architecture discussions, and technical planning. The interview framework becomes your professional communication pattern.

Practice this method not just for interviews, but as professional development. It makes you a better systems thinker and communicator regardless of whether you’re interviewing.

When You’re Ready for More Structured Practice

If you’ve found this framework valuable and want to accelerate your preparation with comprehensive training and feedback, I’ve built something specifically for senior developers like you.

geekmerit.com provides the complete system design interview preparation built exclusively for experienced developers. The course teaches this exact six-step method through 200+ practice problems, 48 detailed video lessons, and 12 full mock interviews with scoring.

Unlike generic courses, every module assumes 5+ years of development experience and focuses on execution skills, not basic concepts you already know. You’ll get targeted feedback on where your answers lose structure, which transitions need smoothing, and how to calibrate depth for different interviewer styles.

Three learning paths fit different preparation styles: Self-Paced ($197) for disciplined learners who want all materials, Guided ($397) adding live coaching and personalized feedback, or Bootcamp ($697) with maximum support including live mock interviews and custom study plans.

Every plan includes lifetime access, all practice problems and solutions, and a 30-day money-back guarantee. View detailed pricing and curriculum on geekmerit.com.

Final Thoughts

System design interviews can feel intimidating when you’re improvising. They become manageable when you have a framework. They become opportunities to showcase your skills when that framework becomes automatic.

The six steps you’ve learned create interview-safety through consistent structure. They work for URL shorteners, messaging systems, payment platforms, video services, and any other problem type. They work across companies and interviewer styles.

You now have the execution pattern that transforms knowledge into performance. Practice it deliberately. Execute it confidently. The results will follow.

Frequently Asked Questions

How is this method different from just studying system architectures?

Studying architectures teaches you what to know. This method teaches you how to answer. Most candidates fail system design interviews not because they lack knowledge, but because they don’t know how to structure their knowledge into a clear interview answer. The six-step framework provides the sequence, pacing, and communication patterns that consistently score points.

Will this method work for different interview styles and companies?

Yes. The method is based on how interviewers are trained to evaluate candidates, not company-specific preferences. Whether you’re interviewing at FAANG companies, startups, or mid-size tech companies, interviewers assess the same core skills: clear communication, structured thinking, appropriate depth, and trade-off reasoning. The six steps demonstrate all of these regardless of the specific problem or interviewer.

How long does it take to get comfortable with this framework?

Most developers need 10-15 practice problems to internalize the six-step sequence. The first few problems feel mechanical as you consciously execute each step. By problem 10, the transitions become natural. By problem 15, you’re thinking in the framework automatically. Expect 2-3 weeks of daily practice (45 minutes per problem) to reach fluency.

What if the interviewer asks me to skip the clarification phase and just start designing?

This rarely happens, but if it does, acknowledge the request and do a compressed version. Say “Let me quickly state my key assumptions” and spend 30 seconds on critical ones—scale, core features, what’s out of scope. Then proceed to high-level design. Even compressed, this shows structured thinking and prevents misalignment later in the interview.

Should I still learn common system components like message queues and databases?

Absolutely. This framework provides execution structure, but you still need technical knowledge to fill that structure. Think of it like writing: grammar and structure are essential, but you also need vocabulary and ideas. Study common patterns and technologies, then use this method to communicate your knowledge effectively during interviews.

How do I practice this method effectively without an interview partner?

Solo practice is valuable if you do it out loud. Pick a problem, set a 45-minute timer, and execute all six steps speaking to an empty room or recording yourself. The awkwardness of talking alone is intentional—it simulates interview pressure. Review recordings to identify rambling, unclear transitions, or skipped steps. Aim for one problem daily until the framework feels automatic, then practice weekly to maintain fluency.

Content Integrity Note

This guide was written with AI assistance and then edited, fact-checked, and aligned to expert-approved teaching standards by Ram Arun. Ram has over 10 years of experience coaching software developers through technical interviews at top-tier companies including FAANG and leading enterprise organizations. His background includes conducting 500+ mock system design interviews and helping engineers successfully transition into senior, staff, and principal roles. Technical content regarding distributed systems, architecture patterns, and interview evaluation criteria is sourced from industry-standard references including engineering blogs from Netflix, Uber, and Slack, cloud provider architecture documentation from AWS, Google Cloud, and Microsoft Azure, and authoritative texts on distributed systems design.