System Design Interview Mistakes: Why Even Strong Engineers Fail

If you’ve walked out of a system design interview feeling unsettled—not because you completely failed, but because something felt off—this guide is for you. You answered the question. You explained your architecture. You used the right terminology. And yet, you sensed it slipping away.

Later, the feedback comes back vague: “You didn’t show enough structure.” “You jumped to solutions too quickly.” “We expected more clarity.”

This creates a specific kind of frustration that hits strong engineers the hardest. You know you can design systems. You do it at work. But in interviews, that competence doesn’t translate. This page exists to explain why that happens—and what to do about it.

Last updated: Feb. 2026

If the biggest gap is that you haven’t done formal system design before, start with: How to Crack System Design Interviews Without Prior Design Experience.

Table of Contents

- 1. The Frustrating Disconnect: Why Experience Doesn’t Guarantee Success

- 2. The Damaging Myth: “If I’m Experienced, I Shouldn’t Make These Mistakes”

- 3. What System Design Interviews Actually Test (And Why That’s So Frustrating)

- 4. The Most Common System Design Interview Mistakes

- 5. Why These Are Not Beginner Mistakes

- 6. How Interviewers Interpret Your Answers (Even When You Sound Smart)

- 7. The Reframe That Changes Everything

- 8. FAQs

The Frustrating Disconnect: Why Experience Doesn’t Guarantee Success

You’ve built production systems. You’ve handled scale, managed outages, made trade-off decisions that actually mattered. Yet here you are, preparing for system design interviews that somehow feel more difficult than the real work.

This disconnect is not in your head.

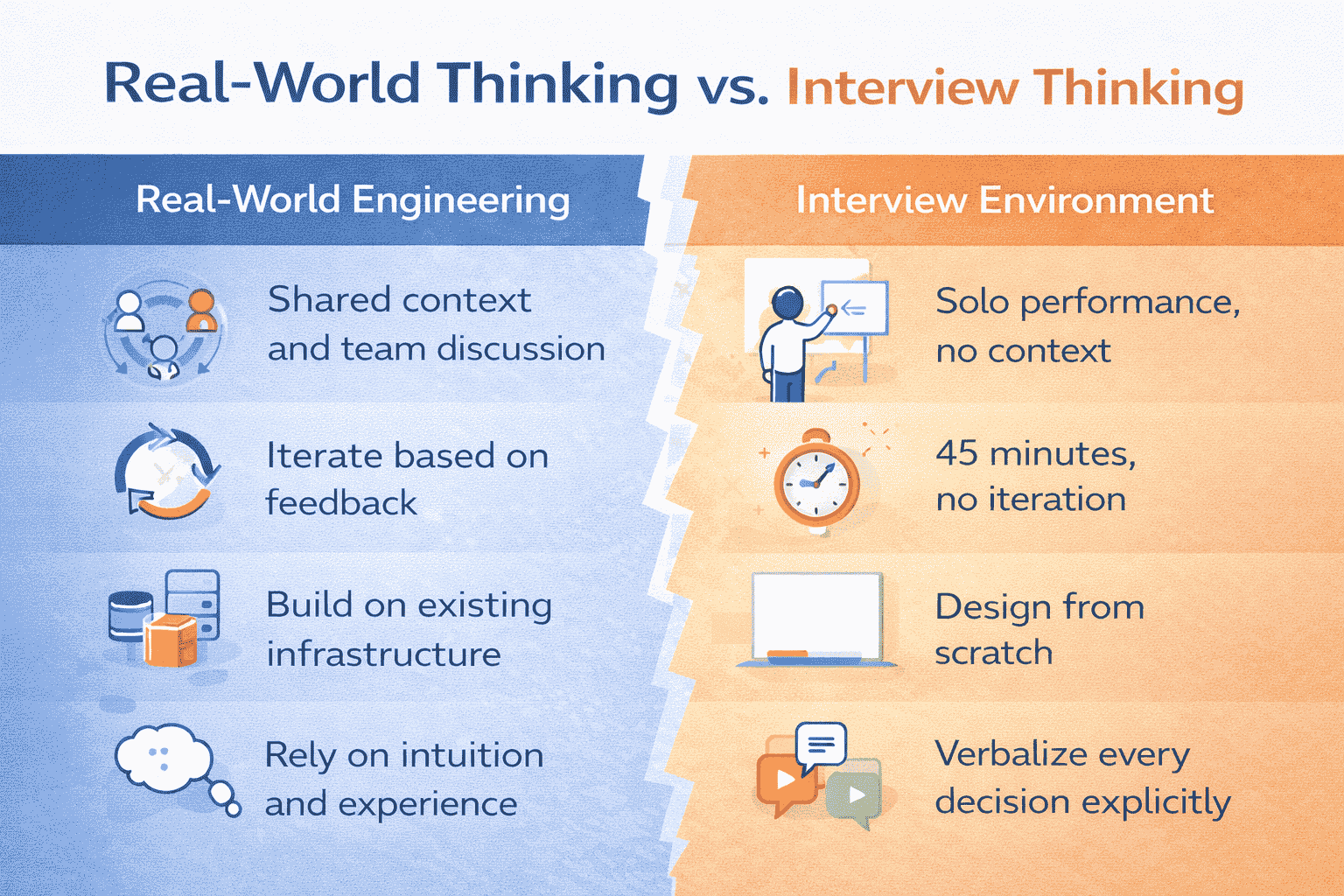

System design interviews operate under fundamentally different rules than real-world engineering. At work, you have context. You have teammates. You have time to iterate. You have existing systems to reference and build upon.

What Makes the Interview Environment Different

In a 45-minute system design interview, all of that disappears. You’re handed an ambiguous problem and expected to design a solution from scratch while simultaneously explaining your thought process to someone who’s actively looking for reasons to reject you.

The interviewer isn’t there to help you succeed. They’re there to evaluate whether your thinking process is visible, structured, and deliberate enough to trust you with production systems.

This evaluation happens whether you’ve been building distributed systems for ten years or two. Your resume got you in the room. But once the interview starts, your experience only matters if you can demonstrate it in a way the interview format rewards.

The Specific Pain Point

The most frustrating part? You often don’t know what went wrong. The feedback is vague (“We’re looking for stronger candidates”) or coded in ways that don’t help (“We wanted to see more structure”).

So you try again. Maybe you study more distributed systems concepts. Maybe you memorize more architectural patterns. Maybe you practice more mock interviews.

If your prep is mostly videos and random practice, this comparison helps you choose a better path: System Design Coaching vs YouTube for Interview Prep.

And you fail again. Not because you lack knowledge—but because you’re solving the wrong problem.

The Real Problem

Most engineers approach system design interview prep the same way they approach learning new technology: consume information, build understanding, practice application.

But system design interviews aren’t testing your ability to learn systems. They’re testing your ability to communicate about systems under artificial constraints that never exist in real work.

This is why strong engineers fail while less experienced candidates sometimes pass. The less experienced candidates aren’t better at systems—they’re better at the specific performance the interview requires.

Once you understand this distinction, everything changes. You stop trying to prove you know systems and start learning how to demonstrate structured thinking under pressure.

The Damaging Myth: “If I’m Experienced, I Shouldn’t Make These Mistakes”

One of the most damaging beliefs engineers carry into system design interviews is this: “Experience equals system design skill.”

It feels logical. After all, you’ve worked on real systems—handling scale, outages, and trade-offs that actually mattered. So when you struggle in an interview, the conclusion feels personal: “Maybe I’m not as good as I thought.”

That conclusion is wrong.

The Experience Trap

Most system design interview failures are not caused by lack of ability. They’re caused by how ability is expressed under interview conditions.

The interview rewards behaviors that are different from real-world engineering. That mismatch is where mistakes begin.

At work, when you design a system, you likely start by understanding existing infrastructure. You talk to teammates. You review documentation. You prototype in a safe environment. You iterate based on real feedback from real users.

Why Experience Can Work Against You

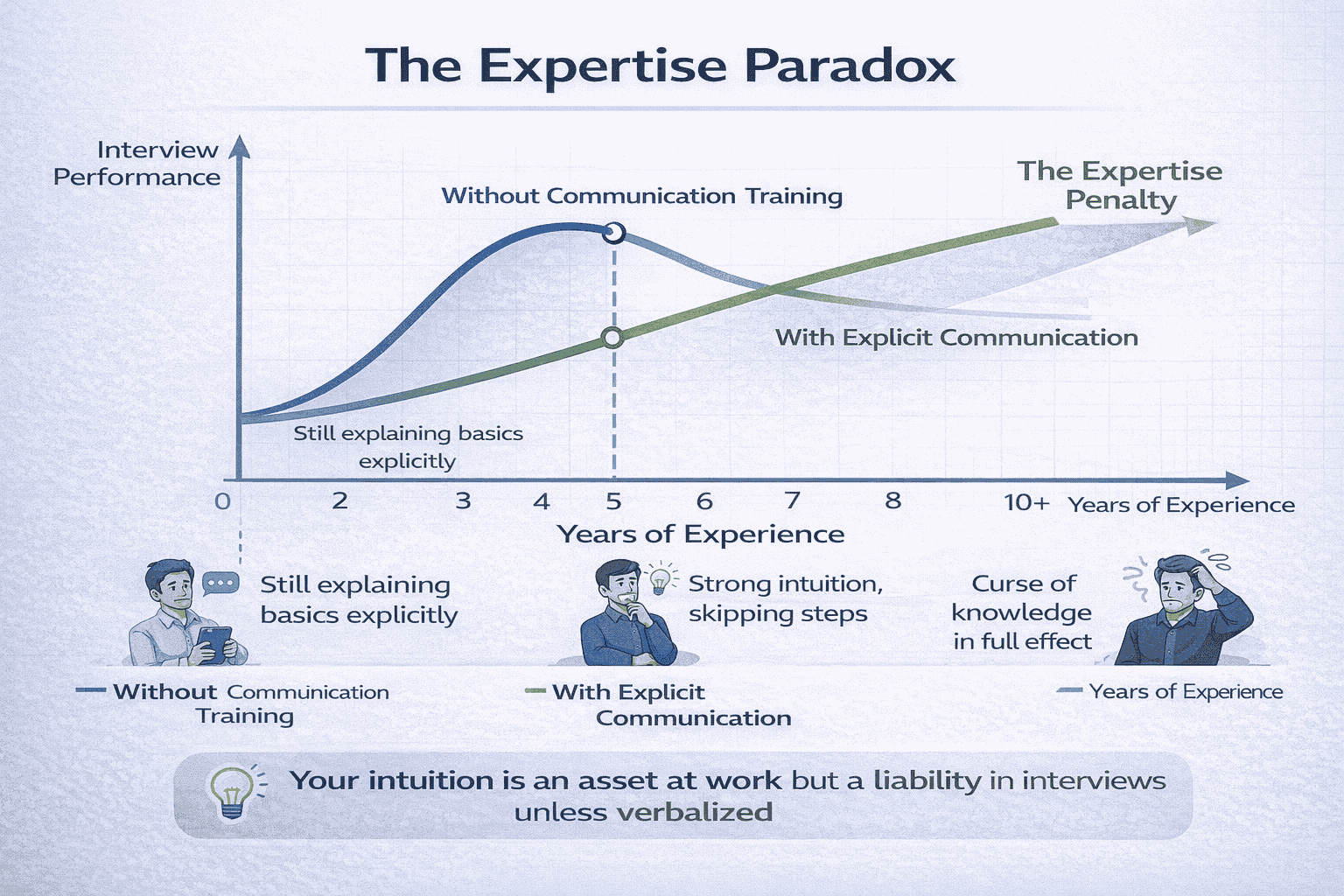

Experienced engineers have built strong intuition. Intuition is fantastic for shipping products. It’s terrible for interviews that demand explicit reasoning at every step.

When you say “We should use a message queue here,” an experienced engineer might understand the ten unstated assumptions behind that choice. An interviewer doesn’t.

They need to hear: “Given our requirement for asynchronous processing and the potential for traffic spikes, a message queue lets us decouple the API from background workers. This means if our job processors slow down, we don’t block user requests. The trade-off is added complexity and potential message ordering challenges.”
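To make the decoupling in that answer concrete, here is a minimal sketch that uses Python’s in-process `queue.Queue` as a stand-in for a real message broker. The names `run_demo`, `handle_request`, and `worker` are hypothetical, and a production queue would add persistence, retries, and ordering guarantees that this sketch ignores.

```python
import queue
import threading
import time
from typing import List, Tuple


def run_demo(payloads: List[str], job_seconds: float = 0.01) -> Tuple[List[str], List[str]]:
    """Enqueue every payload through a fast handler while one slow worker drains the queue."""
    jobs: queue.Queue = queue.Queue()   # stand-in for a message broker (assumption)
    processed: List[str] = []

    def handle_request(payload: str) -> str:
        # The API path only enqueues, so a slow worker never blocks the caller.
        jobs.put(payload)
        return "accepted"

    def worker() -> None:
        while True:
            payload = jobs.get()
            if payload is None:          # sentinel: no more work
                break
            time.sleep(job_seconds)      # simulate slow background processing
            processed.append(payload)

    thread = threading.Thread(target=worker)
    thread.start()
    responses = [handle_request(p) for p in payloads]
    jobs.put(None)                       # tell the worker to stop after the backlog
    thread.join()
    return responses, processed


if __name__ == "__main__":
    print(run_demo(["resize-1", "resize-2", "resize-3"]))
```

The handler returns “accepted” without waiting for the worker, which is the benefit the answer names. With a single worker the order is preserved; with several workers it would not be, which is the ordering trade-off the answer acknowledges.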

The Personal Cost of This Myth

When experienced engineers fail interviews, they internalize it differently than junior engineers do.

A junior engineer might think: “I need to learn more.” An experienced engineer thinks: “I’m not as good as I thought.”

The second thought is more damaging because it’s harder to recover from. It creates doubt in abilities you’ve already proven in production.

But here’s the truth: Your production experience is valid. Your skills are real. The problem isn’t you—it’s the mismatch between how you’ve learned to work and what the interview artificially requires.

Breaking Free From the Myth

The first step to better interview performance is rejecting this myth entirely.

You don’t need to question your abilities. You need to understand that system design interviews are a specific skill—one that’s only tangentially related to system design ability.

Think of it like this: Being a great basketball player doesn’t automatically make you great at basketball video games. The skills overlap, but the performance requirements are different.

Once you stop taking interview failures personally, you can start addressing them systematically.

What System Design Interviews Actually Test (And Why That’s So Frustrating)

At work, system design is messy, collaborative, and iterative. Context is shared. Requirements evolve. Decisions are refined over time.

Interviews strip all of that away.

The Four Core Evaluation Criteria

System design interviews test how you reason when information is incomplete. They test how you structure thoughts under time pressure. They test how clearly you explain decisions and trade-offs. They test how disciplined your thinking remains without context.

Notice what’s missing from that list: They are not testing whether you’ve “built this before.”

This distinction explains why strong engineers fail while less-experienced candidates sometimes pass.

Criterion 1: Structured Problem Decomposition

Interviewers want to see that you can break down ambiguous problems systematically.

At work, you might jump directly to familiar patterns because you’ve solved similar problems before. In interviews, that looks like skipping steps.

What interviewers expect: “Let me start by clarifying the requirements. Are we designing for 100 users or 100 million? What’s our consistency requirement—can we accept eventual consistency or do we need strong consistency? What’s the expected write-to-read ratio?”

What many engineers say: “Okay, so we’ll need a load balancer, an API layer, a caching tier, and a database cluster.”

The second approach might be technically correct. But it signals that you make assumptions without validation—a risk factor in any engineering organization.

Criterion 2: Explicit Trade-Off Discussion

Every architecture decision involves trade-offs. Strong engineers understand this instinctively. But interviews require you to verbalize those trade-offs explicitly.

Interviewers aren’t just listening for the right answers. They’re listening for evidence that you’ve considered alternatives and can articulate why you chose what you chose.

“We’ll use Redis for caching” is incomplete. “We’ll use Redis for caching because we need sub-millisecond read latency and can accept eventual consistency. The trade-off is that we need to handle cache invalidation, and any evicted or expired key becomes a cache miss that falls back to the slower database” is complete.

The second version shows judgment. The first version shows familiarity with tools.
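If it helps to see what that caching trade-off looks like in practice, here is a minimal cache-aside sketch. It uses a plain dictionary instead of Redis so it runs anywhere; the class name `CacheAside`, the `load_from_db` callback, and the injectable `clock` are all assumptions made for illustration.

```python
import time
from typing import Callable, Dict, Tuple


class CacheAside:
    """Read-through-on-miss cache with a TTL; a dict stands in for Redis (assumption)."""

    def __init__(self, load_from_db: Callable[[str], str], ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._load = load_from_db
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: Dict[str, Tuple[str, float]] = {}  # key -> (value, expires_at)

    def get(self, key: str) -> str:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[1] > now:
            return entry[0]                  # hit: fast, but possibly stale
        value = self._load(key)              # miss or expired: pay the database cost
        self._entries[key] = (value, now + self._ttl)
        return value

    def invalidate(self, key: str) -> None:
        # Call this on writes to shorten the window in which readers see stale data.
        self._entries.pop(key, None)
```

A hit can return a value the database has since changed, which is the eventual consistency you said you could accept. Calling `invalidate` on every write is the invalidation complexity you said you would take on.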

Criterion 3: Clear Communication Under Pressure

System design interviews are fundamentally communication tests disguised as technical tests.

Your ability to explain complex systems clearly matters more than your ability to design complex systems correctly. This feels backwards to most engineers, but it reflects a real workplace need.

At senior levels, you’re not just writing code. You’re influencing architecture decisions across teams. You’re explaining trade-offs to stakeholders. You’re documenting systems for future maintainers.

If you can’t clearly articulate your thinking in an interview, interviewers assume you can’t do it in design docs, architectural review meetings, or incident postmortems either.

📊 Table: What Interviewers Actually Evaluate

This table breaks down the gap between what engineers think is being tested versus what interviewers actually care about. Understanding this distinction is the first step toward better performance.

| What Engineers Think Is Tested | What Interviewers Actually Test | Why This Matters |
|---|---|---|
| Knowledge of distributed systems patterns | Ability to explain why a pattern fits the problem | Knowing patterns is table stakes; demonstrating judgment is the differentiator |
| Correctness of final architecture | Quality of reasoning that led to the architecture | Real systems evolve; your thinking process is what scales |
| Speed of arriving at a solution | Structured approach even when uncertain | Calm problem-solving under ambiguity is more valuable than quick answers |
| Technical depth in specific areas | Breadth of considerations (security, cost, ops, monitoring) | Senior engineers see the whole system, not just the happy path |
| Perfect solution that handles every edge case | Pragmatic solution with acknowledged limitations | Production systems have constraints; ignoring them signals inexperience |

Criterion 4: Graceful Handling of Uncertainty

Perhaps the most important criterion: How you behave when you don’t know the answer.

Strong engineers are used to being right. Interviews intentionally push you into territory where you might be wrong.

The interviewer asks: “How would you handle a sustained 10x traffic spike during Black Friday?” You haven’t designed for that scale before. What do you do?

Many engineers try to fake confidence. They propose solutions they’re not sure about without acknowledging uncertainty. This backfires immediately.

Better approach: “I haven’t designed for that exact scale, but here’s how I’d think through it. First, I’d want to understand our bottlenecks through load testing. Based on typical web architectures, I’d expect the database to be the constraint before the API layer. So I’d explore…”

This shows structured thinking even in unfamiliar territory. That’s what interviewers want to see.

The Most Common System Design Interview Mistakes

Most candidates don’t fail because they say something obviously wrong. They fail because of subtle but repeatable patterns.

These patterns feel reasonable—especially if you’ve built systems in production. But in interviews, they are interpreted as risk signals, not strengths.

Mistake 1: Jumping Straight to Architecture

The interviewer asks: “Design a URL shortener.” You immediately start: “We’ll need a REST API, a database to store mappings, a cache layer…”

It feels efficient. You’re demonstrating technical knowledge right away. But interviewers see skipped reasoning.

What’s missing? Requirements clarification. Scale estimation. Success criteria definition. Use case prioritization.

When you skip these steps, the interviewer can’t evaluate whether your architecture actually solves the right problem. More importantly, they assume you’d skip these steps at work too.

Why This Happens to Strong Engineers

At work, when your product manager says “We need a URL shortener,” you likely have context. You know your user base. You know your infrastructure. You know your team’s capabilities.

That context lives in your head, so you skip to solutions. In interviews, that context doesn’t exist. The interviewer needs to see you build it from scratch.

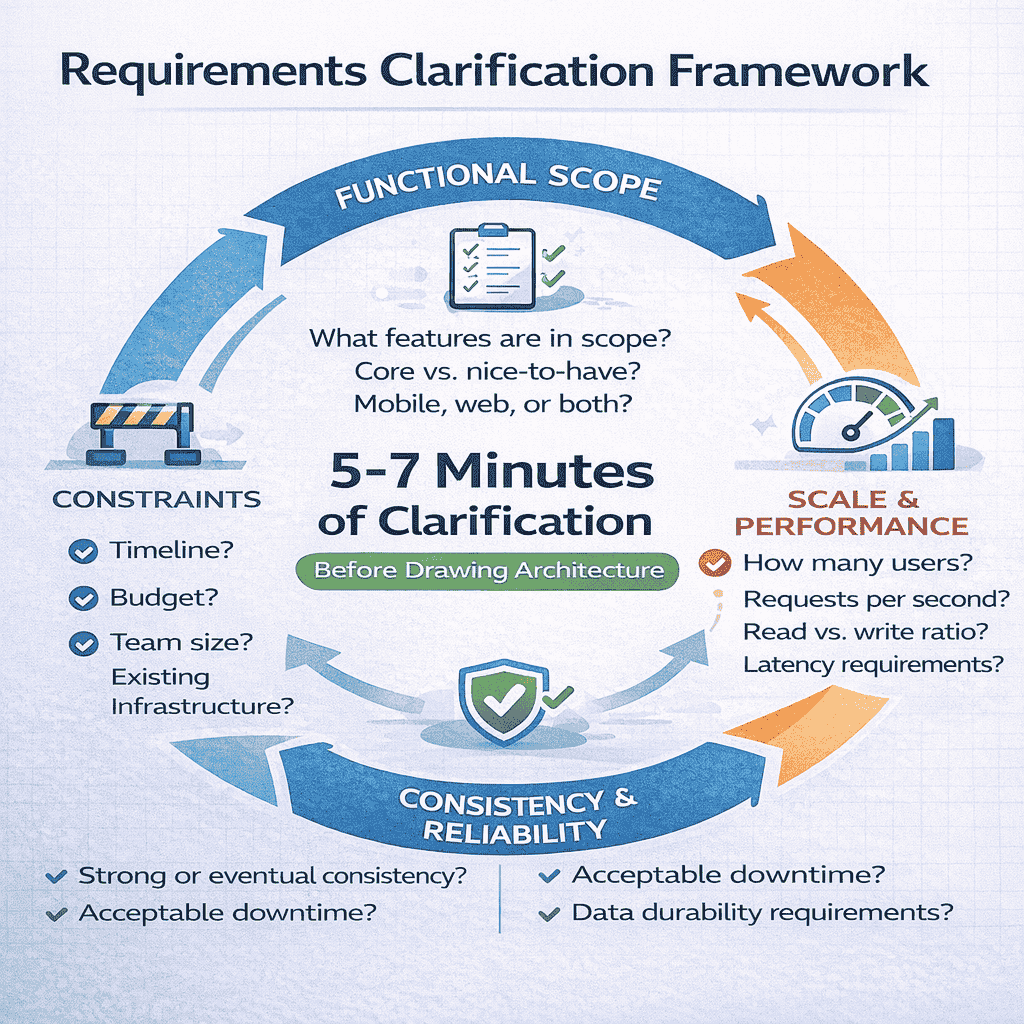

The fix: Always spend the first 5-7 minutes clarifying before you draw anything. Ask about scale, consistency requirements, read-write ratios, expected user behavior, and success metrics.
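Once the interviewer answers those questions, it helps to turn the answers into rough numbers before drawing anything. The sketch below shows one way to do that arithmetic for the URL shortener. Every input (new URLs per day, reads per write, bytes per record, retention) is a hypothetical figure you would confirm with the interviewer, not a real requirement.

```python
from dataclasses import dataclass

SECONDS_PER_DAY = 86_400


@dataclass(frozen=True)
class Estimate:
    writes_per_sec: float
    reads_per_sec: float
    storage_bytes: int


def estimate(new_urls_per_day: int, reads_per_write: float,
             bytes_per_record: int, retention_years: int) -> Estimate:
    """Back-of-envelope averages; peak traffic would be some multiple of these."""
    writes = new_urls_per_day / SECONDS_PER_DAY
    reads = writes * reads_per_write
    storage = new_urls_per_day * 365 * retention_years * bytes_per_record
    return Estimate(writes, reads, storage)


if __name__ == "__main__":
    # Assumed inputs: 100M new URLs/day, 100 reads per write, 500 bytes/record, 5 years.
    print(estimate(100_000_000, 100, 500, 5))
```

With those assumed inputs the result is roughly 1,160 writes per second, about 116,000 reads per second, and about 91 TB of storage over five years. Numbers like these are what justify a cache or a sharding discussion later, instead of proposing them by reflex.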

Mistake 2: Not Clarifying Requirements

This mistake is the root cause of Mistake 1, but it deserves its own discussion because it manifests in multiple ways.

What seems obvious to you looks like assumption-making to interviewers.

Example: “Design Instagram.” Do they mean the feed? The upload pipeline? The recommendation engine? All of it? Without asking, you can’t know.

Even worse: You might assume certain requirements that the interviewer wasn’t thinking about. Then your entire design solves a different problem than what they had in mind.

Mistake 3: Designing for Scale Too Early

You want to impress the interviewer with your distributed systems knowledge. So when designing a simple feature, you immediately propose: “We’ll need a distributed cache with consistent hashing, a message queue for async processing, and a microservices architecture.”

This signals poor prioritization.

Good engineers optimize for the actual problem. If the system needs to handle 100 requests per second, proposing architecture for 100,000 requests per second shows you can’t distinguish important concerns from premature optimization.

Interviewers interpret this as: “This person will over-engineer everything and waste team resources.”

The Right Way to Handle Scale

Start with the simplest architecture that meets requirements. Then explicitly state: “For the initial requirements of X users and Y requests per second, this architecture is sufficient. If we needed to scale to Z, here’s what would break first and how I’d address it.”

This demonstrates that you can prioritize and that you understand incremental improvement—both critical for senior engineers.
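One way to back up a “here’s what would break first” statement is a quick utilization check per component. The sketch below is only an illustration: the `Component` fields, the capacity figures, and the traffic shares are invented numbers, and a real answer would come from load testing.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Component:
    name: str
    capacity_rps: float    # sustainable requests/second (assumed figure)
    traffic_share: float   # fraction of total requests that reach this component


def utilization(component: Component, total_rps: float) -> float:
    return total_rps * component.traffic_share / component.capacity_rps


def first_to_break(components: List[Component], total_rps: float) -> Optional[Component]:
    """Return the most saturated component if it exceeds capacity, else None."""
    worst = max(components, key=lambda c: utilization(c, total_rps))
    return worst if utilization(worst, total_rps) > 1.0 else None


SIMPLE_STACK = [
    Component("api_servers", capacity_rps=5_000, traffic_share=1.0),
    Component("cache", capacity_rps=50_000, traffic_share=1.0),
    Component("primary_db", capacity_rps=500, traffic_share=0.2),  # cache misses + writes
]
```

With these made-up figures, `first_to_break(SIMPLE_STACK, 1_000)` returns `None`, while at 3,000 requests per second the database is the first component over capacity. That gives you a concrete reason to talk about read replicas or a higher cache hit rate before anything else.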

If you’re interviewing for a company known for massive scale (Google, Meta, Amazon), they’ll tell you to design for scale. Listen for that signal.

Mistake 4: Treating System Design Like a Coding Problem

You dive into implementation details: “The hash function will use MD5, then we’ll take the first 7 characters…” or “We’ll use a B+ tree index on the timestamp column…”

These details feel productive. You’re being specific. But you’re operating at the wrong level of abstraction.

System design interviews care about architecture, not algorithms. They want to see high-level component interaction, data flow, and trade-off analysis.

When you focus on implementation details without establishing high-level clarity first, the interview feels directionless.

Finding the Right Abstraction Level

Think boxes and arrows, not code. Think “API layer talks to cache, falls back to database” not “we’ll implement a write-through cache with a TTL of 3600 seconds.”

The exception: If the interviewer explicitly asks you to dive deeper into a specific component, then implementation details become relevant.

But even then, start high-level. Let them pull you into depth rather than diving there yourself.

Mistake 5: Skipping Explicit Trade-Offs

This is perhaps the most damaging mistake because it’s the hardest to recognize.

You propose: “We’ll use a NoSQL database.” The interviewer waits for more. You move on to the next component.

What just happened? You made a technology choice without explaining your reasoning. The interviewer now has no way to evaluate your judgment.

Maybe NoSQL is the right choice. Maybe it’s not. Without hearing your trade-off analysis, the interviewer has to assume you’re just naming technologies you’ve heard of.

📊 Table: Common Mistake Patterns and Corrections

This reference table helps you recognize these mistakes in real time during interviews and provides the correction to apply immediately.

| Mistake Pattern | What It Signals to Interviewers | The Correction |
|---|---|---|
| Starting with “We’ll need a load balancer…” | Assumes requirements, skips problem understanding | “Let me first clarify: What’s our expected scale? What’s the read-write ratio?” |
| Proposing microservices for everything | Over-engineers, can’t prioritize | “For initial scale, a monolith works. Here’s when I’d break it apart…” |
| Choosing tech without explaining why | Lacks judgment, parrots buzzwords | “I’m choosing X because [requirement]. The trade-off is [consequence].” |
| Diving into algorithm details early | Wrong abstraction level, misses big picture | “Let me first map out the main components and data flow…” |
| Going silent while thinking | Can’t communicate under pressure | “I’m thinking through how to handle writes at this scale… let me compare two options…” |
| Ignoring monitoring, security, costs | Only sees happy path, not production reality | “For monitoring, we’d track [metrics]. Security-wise, we need [controls]…” |

The Trade-Off Template

Every significant decision should follow this pattern: “I’m choosing [X] because it gives us [benefit]. The trade-off is [cost/limitation]. The alternative would be [Y], which offers [different benefit] but at the cost of [different limitation].”

This template forces you to demonstrate judgment at every decision point.

Example: “I’m choosing a relational database because we need strong consistency for financial transactions. The trade-off is that horizontal scaling is more complex than with NoSQL. The alternative would be a distributed NoSQL database like Cassandra, which scales horizontally easily but makes strong consistency harder to guarantee.”

Notice how this version shows you’ve considered multiple options and can articulate the implications of your choice.
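If you like to practice with something more mechanical, the template can be written as a small record that refuses to produce a statement until every slot is filled. This is just a practice aid under my own naming (`TradeOff`, `statement`); nothing about it is a standard tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TradeOff:
    choice: str
    benefit: str
    cost: str
    alternative: str
    alternative_benefit: str
    alternative_cost: str

    def __post_init__(self) -> None:
        # An empty slot means part of the reasoning was skipped.
        for field_name, value in vars(self).items():
            if not value.strip():
                raise ValueError(f"missing part of the trade-off: {field_name}")

    def statement(self) -> str:
        return (
            f"I'm choosing {self.choice} because it gives us {self.benefit}. "
            f"The trade-off is {self.cost}. "
            f"The alternative would be {self.alternative}, which offers "
            f"{self.alternative_benefit} but at the cost of {self.alternative_cost}."
        )
```

Filling it in for each major decision in a mock interview makes it obvious which decisions you named without justifying.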

Why These Mistakes Cluster Together

These five mistakes rarely occur in isolation. They feed into each other.

When you skip requirements clarification (Mistake 2), you jump straight to architecture (Mistake 1). When you focus on proving your knowledge, you over-engineer for scale (Mistake 3). When you’re anxious about the interview, you dive into comfortable implementation details (Mistake 4). And when you’re moving too fast, you forget to explain trade-offs (Mistake 5).

Understanding this clustering is important because fixing one mistake often prevents the others.

If you commit to spending 5-7 minutes on requirements clarification, you naturally avoid jumping to solutions. If you commit to explaining trade-offs, you naturally slow down and think more carefully.

Why These Are Not Beginner Mistakes

Here’s the uncomfortable truth: Experienced engineers are more likely to make these mistakes than juniors.

This seems backwards. How can someone with ten years of production experience be worse at interviews than someone with two years?

Experience Builds Intuition. Interviews Demand Explicit Reasoning.

The more systems you’ve built, the more patterns you recognize instantly. Pattern recognition is incredibly valuable at work. In interviews, it becomes a liability.

A junior engineer doesn’t have strong intuition yet. When asked to design a system, they have to think through every step explicitly. This thinking-out-loud process is exactly what interviews reward.

An experienced engineer sees the problem and immediately knows the solution. But “knowing” the answer isn’t enough. You have to show the work.

The Curse of Expertise

Psychologists call this the “curse of knowledge.” Once you know something well, it’s hard to remember what it’s like not to know it. You skip steps that seem obvious to you but aren’t obvious to observers.

At work, this curse is usually manageable because your teammates have similar context. In interviews, your “obvious” skip looks like a gap in reasoning.

Interviewer: “Why did you choose a write-ahead log here?”

Experienced engineer: “For durability.” (Thinks: Obviously for durability, what else would it be for?)

Junior engineer: “A write-ahead log ensures that even if the system crashes after acknowledging a write but before persisting to the main storage, we can replay the log on recovery. This gives us durability guarantees at the cost of slightly increased write latency.”

Same knowledge. Different communication. The junior gets credit for the better answer because they externalized their reasoning.
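For readers who want to see the mechanism behind that longer answer, here is a minimal write-ahead log sketch for an in-memory key-value store. `TinyKV` and its JSON-lines log format are assumptions chosen for brevity; real storage engines add checksums, log truncation, and checkpoints.

```python
import json
import os
from typing import Dict


class TinyKV:
    """In-memory key-value store that logs each write to disk before applying it."""

    def __init__(self, log_path: str) -> None:
        self._log_path = log_path
        self.data: Dict[str, str] = {}
        self._replay()                      # recovery: rebuild state from the log
        self._log = open(log_path, "a", encoding="utf-8")

    def _replay(self) -> None:
        if not os.path.exists(self._log_path):
            return
        with open(self._log_path, encoding="utf-8") as log:
            for line in log:
                try:
                    record = json.loads(line)
                except json.JSONDecodeError:
                    break                   # torn final record from a crash: stop here
                self.data[record["key"]] = record["value"]

    def put(self, key: str, value: str) -> None:
        self._log.write(json.dumps({"key": key, "value": value}) + "\n")
        self._log.flush()
        os.fsync(self._log.fileno())        # durability costs extra write latency
        self.data[key] = value              # apply only after the log is on disk

    def close(self) -> None:
        self._log.close()
```

Opening a new `TinyKV` on the same path after a crash replays the log and restores every acknowledged write. The `fsync` on each `put` is where the slightly increased write latency comes from.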

Real-World Context Doesn’t Transfer

At work, you rely on shared context constantly. When you propose a solution, your teammates understand the constraints you’re working within. They know your infrastructure, your team’s velocity, your budget limitations.

In interviews, none of that exists. Every decision needs explicit justification because the interviewer has no context.

This context gap hits experienced engineers harder because they’re more accustomed to relying on it. A junior engineer hasn’t built up that dependency yet.

Production Experience Creates Different Habits

At work, good engineers move fast. They prototype, gather feedback, iterate. “Fail fast” is a virtue.

In interviews, moving fast without showing your reasoning process looks like carelessness.

At work, good engineers reuse proven patterns. “Don’t reinvent the wheel” is sensible advice.

In interviews, reaching for patterns without explaining why they fit looks like you’re mechanically applying solutions without thinking.

At work, good engineers make pragmatic trade-offs quickly based on intuition built from past experiences.

In interviews, making quick decisions without verbalizing the trade-off analysis looks like you’re not considering alternatives.

See the pattern? The habits that make you effective at work actively hurt your interview performance.

The Confidence Gap

Experienced engineers carry confidence into interviews. This confidence manifests as: “I’ve built this before, I know how to do this.”

That confidence can make you less careful about explaining your reasoning. After all, you’re certain you’re right.

Junior engineers, lacking that confidence, compensate by being more explicit and careful. Ironically, this cautiousness looks like stronger reasoning to interviewers.

The most dangerous phrase in system design interviews: “Obviously, we should…”

Nothing is obvious to the interviewer. The moment you say “obviously,” you’ve just skipped the reasoning they wanted to evaluate.

Breaking Free From Experience-Based Mistakes

The solution isn’t to suppress your experience. The solution is to externalize your internal reasoning process.

Think of it as debugging your own thoughts out loud. When you’re solving a production issue, you might work through possibilities in your head. In interviews, do that same process out loud.

“I’m considering two approaches here. Option A gives us better write performance but complicates reads. Option B simplifies reads but means writes need more coordination. Given our requirement for read-heavy traffic, I’m leaning toward Option B, but let me think through the implications…”

This isn’t padding or showing off. It’s making your expertise visible so it can be evaluated.

How Interviewers Interpret Your Answers (Even When You Sound Smart)

Interviewers aren’t listening for perfect architectures. They’re listening for signals about how you think and work.

Understanding what they’re actually evaluating changes everything about how you prepare.

The Interview Is a Simulation of Working Together

When an interviewer asks you to design a system, they’re not really asking “Can you design this system?” They’re asking: “What would it be like to work with you on a complex project?”

Every behavior you exhibit gets mapped to a workplace scenario.

When you jump to solutions without clarifying requirements, they think: “This person will build things we didn’t ask for.”

When you skip trade-off discussions, they think: “This person won’t consider operational costs or maintenance burden.”

When you go silent while thinking, they think: “This person won’t communicate during critical incidents.”

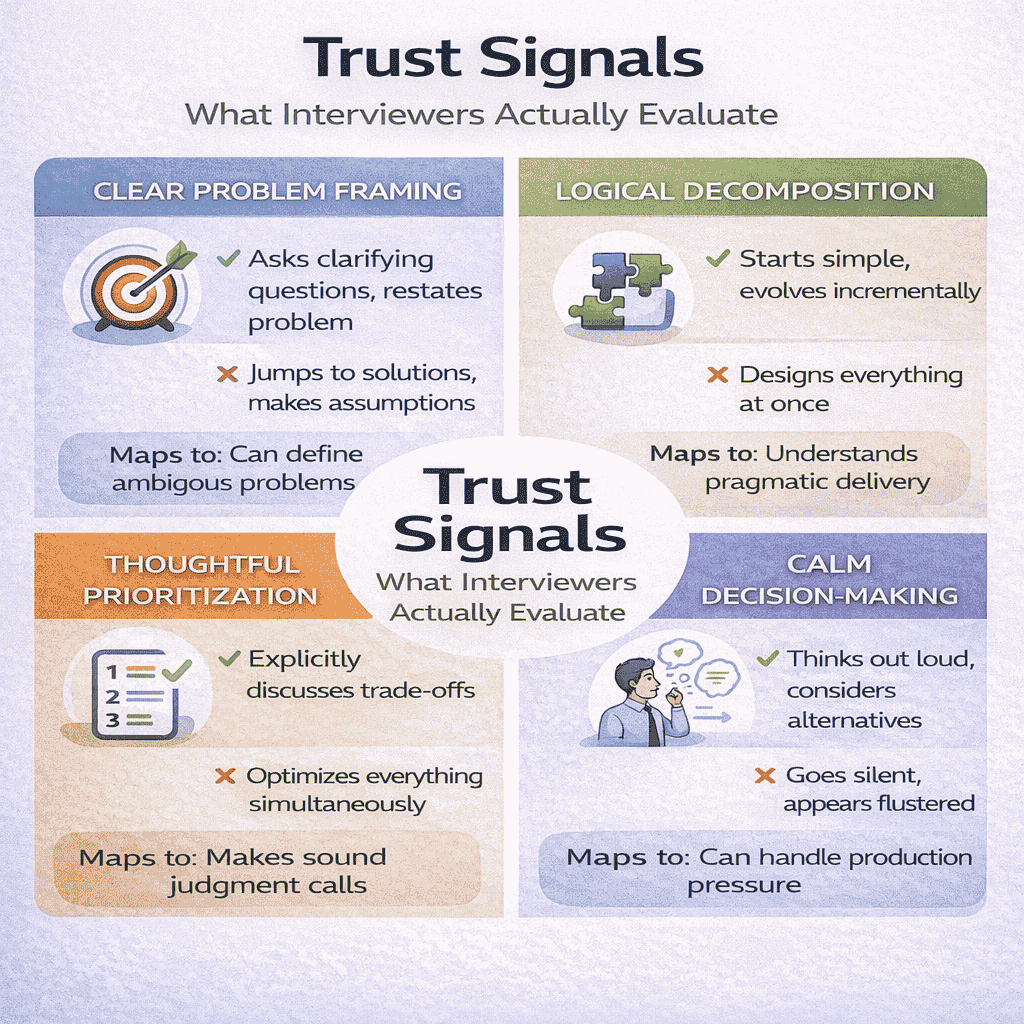

The Four Trust Signals Interviewers Need

Every answer you give is being evaluated against four questions. These questions determine whether you pass or fail, regardless of technical correctness.

Trust Signal 1: Clear Problem Framing

Can you take an ambiguous problem and define it precisely?

At senior levels, most problems start ambiguous. “Our checkout page is slow” could mean anything. “Users are complaining about the feed” doesn’t specify which users or what aspect of the feed.

Strong engineers clarify before they act. Weak engineers make assumptions and build the wrong thing.

In interviews, this shows up as: Do you ask clarifying questions? Do you restate the problem back to confirm understanding? Do you identify constraints and success criteria upfront?

If you skip this, the interviewer thinks: “This person will waste weeks building features nobody asked for.”

Trust Signal 2: Logical Decomposition

Can you break down complex systems into manageable pieces?

Large systems are built incrementally. Nobody designs everything at once. Strong engineers know how to identify the core problem, solve that first, then layer additional complexity.

In interviews, this shows up as: Do you start with a simple architecture that works, then evolve it? Or do you try to design everything simultaneously?

When you propose ten microservices immediately, the interviewer thinks: “This person doesn’t understand incremental delivery.”

When you start with a monolith and explain when you’d break it apart, the interviewer thinks: “This person understands pragmatic engineering.”

Trust Signal 3: Thoughtful Prioritization

Can you distinguish between critical and optional concerns?

Production systems operate under constraints. Time, budget, team size, existing infrastructure—every decision involves trade-offs.

Strong engineers optimize for the actual constraints. Weak engineers try to optimize everything simultaneously.

In interviews, this shows up as: Do you discuss trade-offs explicitly? Do you acknowledge what you’re not optimizing for? Do you explain why certain concerns take priority over others?

When you propose solutions without discussing what you’re sacrificing, the interviewer thinks: “This person doesn’t understand resource constraints.”

When you say “For the MVP, we’ll accept eventual consistency to ship faster, but here’s how we’d add strong consistency later if needed,” the interviewer thinks: “This person makes sound judgment calls.”

Trust Signal 4: Calm Decision-Making Under Uncertainty

Can you make progress when you don’t have all the answers?

Production engineering is full of uncertainty. New requirements emerge. Systems behave unexpectedly. Third-party services fail. Strong engineers stay calm and systematic.

In interviews, this shows up as: Do you think out loud when uncertain? Do you propose reasonable approaches even when you haven’t solved the exact problem before? Or do you freeze or become flustered?

When you go silent for two minutes, the interviewer thinks: “This person will panic during incidents.”

When you say “I haven’t designed for this specific scale before, but let me think through potential bottlenecks systematically,” the interviewer thinks: “This person can handle production pressure.”

How “Smart” Answers Can Still Fail

This is the most counterintuitive part: You can propose technically excellent solutions and still fail the interview.

Example: You design a sophisticated distributed caching system with consistent hashing, read replicas, and write-ahead logging. Technically impressive. But if you didn’t explain why each component was necessary, the interviewer can’t evaluate your judgment.

They’re left wondering: “Did this person carefully analyze trade-offs? Or did they just throw every distributed systems concept they know at the problem?”

Without seeing your reasoning process, they have to assume the latter. That assumption fails you.

The Narrative Problem

Great answers tell a story. The story goes: “Here’s the problem. Here’s why it’s challenging. Here’s my approach. Here’s why I chose this over alternatives. Here are the implications.”

Most engineers skip the story and jump to the solution. The interviewer loses the thread.

They can’t follow your logic because you didn’t make it visible. They can’t evaluate your judgment because you didn’t demonstrate the decision-making process.

This is why communication matters as much as technical knowledge. The best solution in the world is worthless if the interviewer can’t understand how you arrived at it.

📥 Download: Interview Self-Assessment Checklist

Use this checklist after mock interviews or real interviews to identify which trust signals you’re missing. Pinpoint exactly where your communication breaks down and what to practice next.

When Interviewers Ask Follow-Up Questions

Pay attention to what interviewers ask about. Their questions reveal what’s missing from your answer.

If they ask “Why did you choose that database?” it means you didn’t explain your reasoning.

If they ask “How would this handle failure?” it means you forgot to discuss reliability.

If they ask “What about costs?” it means you’re not considering operational constraints.

Strong candidates preempt these questions. They address trade-offs, failure modes, and operational concerns proactively.

When you force the interviewer to ask basic follow-ups, it signals you weren’t thinking comprehensively. When you address these concerns upfront, it signals mature engineering thinking.

The Calibration Problem

Different interviewers calibrate differently. What one interviewer considers “sufficient detail” another considers “too surface-level.”

This inconsistency is frustrating, but you can mitigate it by reading the room.

If the interviewer keeps asking you to go deeper, slow down and add more detail. If they’re trying to move you along, speed up and stay higher-level. If they look confused, pause and ask “Does this approach make sense so far?”

The interview is a conversation, not a monologue. Adjust to feedback in real time.

Engineers who treat the interview as a fixed performance often miss these calibration signals. Engineers who treat it as a collaborative design session naturally adjust their communication.

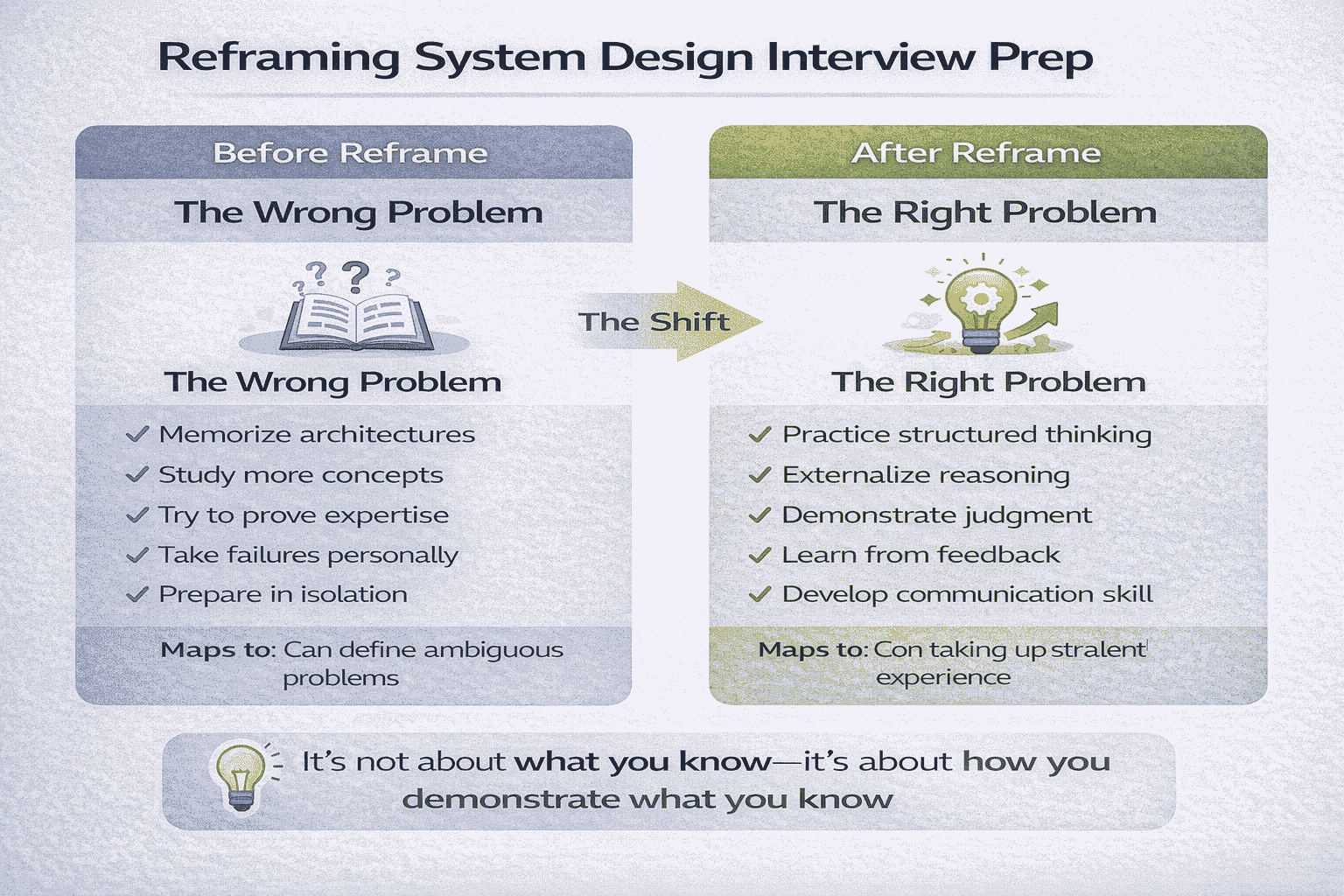

The Reframe That Changes Everything

System design interview mistakes are not a character flaw. They’re not a sign you lack experience. They’re not evidence that you’re not as good as you thought.

They are a sign that you’re solving the wrong problem.

The Problem You Think You’re Solving

Most engineers prepare for system design interviews by studying distributed systems concepts. They learn about the CAP theorem, consistent hashing, consensus algorithms, message queues, and caching strategies.

This knowledge is necessary. But it’s not sufficient.

The problem isn’t that you don’t know these concepts. The problem is that you’re treating the interview as a knowledge test when it’s actually a communication and judgment test.

The Problem You’re Actually Solving

System design interviews test whether you can demonstrate structured thinking under pressure. They test whether you can make your reasoning visible. They test whether you can communicate complex trade-offs clearly.

Once you reframe the problem this way, preparation becomes precise.

You stop trying to memorize every possible system architecture. You start practicing how to think out loud systematically. You stop trying to impress with complexity. You start demonstrating judgment through clear trade-off analysis.

What Changes After the Reframe

After this reframe, everything about interview prep shifts.

You stop studying in isolation and start practicing with feedback. You stop trying to design perfect systems and start focusing on clearly explaining good-enough systems. You stop treating interviews as pass-fail tests and start treating interviewing as a skill to develop.

Most importantly: You stop taking failures personally.

When you understand that interview performance is a separate skill from engineering ability, failed interviews stop feeling like indictments of your competence. They become data points showing where your communication needs refinement.

The Path Forward

Now that you understand what’s actually going wrong, you can address it systematically.

The mistakes you’re making aren’t mysterious. They’re predictable and correctable. You’re jumping to solutions because you haven’t practiced requirements clarification. You’re skipping trade-offs because you haven’t internalized the trade-off template. You’re designing for the wrong scale because you haven’t practiced prioritization frameworks.

Each mistake has a corresponding fix. Each fix can be practiced deliberately.

From Diagnosis to Solution

This page diagnosed the problem. But diagnosis alone doesn’t fix it.

The next step is systematic practice with the right frameworks. Not more studying—practice. Not more mock interviews without structure—deliberate practice targeting specific weaknesses.

Strong engineers who struggle with system design interviews need three things: a clear framework for structuring answers, feedback that identifies specific communication gaps, and repetition to internalize new habits.

Understanding the mistakes is the foundation. Building the skills to avoid them is the next step.

If you’re a senior developer or architect preparing for FAANG-level interviews, the System Design Course for Senior .NET Developers provides the structured framework and coaching that turns understanding into performance. The program includes templates for requirements clarification, trade-off analysis frameworks, and mock interviews with feedback targeting the exact gaps this article identified.

Why This Matters Beyond Interviews

The communication skills that help you pass system design interviews also make you a better engineer.

Externalizing your reasoning helps teammates understand your designs. Explicitly discussing trade-offs improves architectural review meetings. Practicing structured thinking under pressure prepares you for incident response.

These aren’t just interview skills. They’re senior engineering skills that interviews happen to test.

So even if you’re not interviewing right now, developing these capabilities makes you more effective at work. You become someone who can communicate complex technical decisions clearly. Someone who can lead design discussions productively. Someone who can mentor junior engineers effectively.

The interview is just the forcing function that makes these skills visible.

Final Thought: You’re Not Broken

If you walked into this article feeling frustrated—wondering why your experience wasn’t translating to interview success—you now have your answer.

You’re not broken. The interview isn’t broken. There’s just a mismatch between how you’ve learned to work and what the interview format requires.

That mismatch is bridgeable. Thousands of experienced engineers have made this transition. They went from failing interviews despite strong production experience to confidently passing them at top companies.

The difference wasn’t that they became better engineers. The difference was that they learned to demonstrate their engineering ability in the artificial interview format.

You can make the same transition. Now you know what needs to change.

Frequently Asked Questions

Why do I keep failing system design interviews even though I’ve built production systems?

Production engineering and interview performance are different skills. At work, you have context, teammates, and time to iterate. Interviews test your ability to communicate structured thinking under artificial constraints. Your production experience is valuable, but it only helps if you can externalize your reasoning process explicitly. The failures don’t call your abilities into question; they show that you need to practice a different mode of communication.

How much time should I spend on requirements clarification?

Spend 5-7 minutes clarifying requirements before drawing any architecture. This feels long, but it’s critical. Cover functional scope, scale expectations, consistency requirements, and constraints. Interviewers interpret rushed clarification as poor problem-framing skills. The engineers who fail most often are those who spend less than 2 minutes on requirements. The engineers who pass consistently use the full 5-7 minutes, roughly 10-15% of a typical 45-minute interview, just ensuring they understand the problem correctly.

Should I design for massive scale in every interview?

No. Design for the stated requirements first, then explain how you’d scale. Over-engineering signals poor prioritization. Start with the simplest architecture that meets requirements, then say: “For initial scale of X users, this works. If we needed to handle Y users, here’s what would break first and how I’d address it.” This demonstrates judgment. Only design for massive scale immediately if the interviewer explicitly tells you to assume Google/Facebook-level traffic from the start.
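The “X users versus Y users” statement is easier to defend with a quick back-of-envelope estimate. The sketch below is one hedged way to do that arithmetic in Python. Every number in it (user counts, requests per user, the peak multiplier, and per-server capacity) is a hypothetical assumption chosen for illustration, not a figure from this guide, and the function names are made up for the example.

```python
import math

SECONDS_PER_DAY = 24 * 60 * 60


def peak_qps(daily_users: int, requests_per_user: int, peak_factor: float = 2.0) -> float:
    """Average requests per second over a day, scaled by an assumed peak multiplier."""
    return daily_users * requests_per_user / SECONDS_PER_DAY * peak_factor


def servers_needed(qps: float, qps_per_server: float) -> int:
    """Smallest whole number of servers that covers the given load."""
    return max(1, math.ceil(qps / qps_per_server))


# Hypothetical inputs: replace them with the numbers the interviewer gives you.
REQUESTS_PER_USER = 20    # assumed requests per user per day
QPS_PER_SERVER = 1_000    # assumed capacity of one application server

initial_qps = peak_qps(100_000, REQUESTS_PER_USER)      # the "X users" case
growth_qps = peak_qps(50_000_000, REQUESTS_PER_USER)    # the "Y users" case

print(f"initial: {initial_qps:.0f} QPS, {servers_needed(initial_qps, QPS_PER_SERVER)} server(s)")
print(f"growth:  {growth_qps:.0f} QPS, {servers_needed(growth_qps, QPS_PER_SERVER)} server(s)")
```

Under these assumed inputs, the initial load is about 46 requests per second at peak and fits on one server, while the larger load is about 23,000 requests per second and needs 24. That gap is what justifies starting simple and then naming the first component that would break as traffic grows.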

What if I don’t know the answer to something the interviewer asks?

Say so, then demonstrate how you’d figure it out. Honesty about gaps combined with structured problem-solving impresses interviewers more than fake confidence. Example: “I haven’t designed exactly this before, but here’s how I’d approach it systematically…” Then walk through your reasoning. Interviewers test how you handle uncertainty because production engineering is full of unknowns. Your ability to make progress despite incomplete information matters more than having every answer memorized.

How do I know if I’m going into too much implementation detail?

If you’re discussing specific algorithms, code structures, or exact function signatures, you’ve gone too deep. System design interviews operate at the “boxes and arrows” level. Focus on components, data flow, and interactions between services. Only dive into implementation details if the interviewer explicitly asks you to. A good rule: If you wouldn’t show this level of detail in an architecture diagram you’d present to senior leadership, don’t include it in the interview unless requested.

Can I still pass if my final architecture isn’t perfect?

Yes. Interviewers care more about your reasoning process than your final answer. A good-enough architecture with clear trade-off analysis beats a perfect architecture with no explanation of how you got there. Many strong candidates pass while proposing architectures that have some flaws, because they demonstrated sound judgment and could articulate trade-offs. Conversely, candidates with technically perfect architectures fail when they can’t explain their decision-making process. Showing your work matters more than getting the “right” answer.

Content Integrity Note

This guide was written with AI assistance and then edited, fact-checked, and aligned to expert-approved teaching standards by Andrew Williams. Andrew has over 10 years of experience coaching software developers through technical interviews at top-tier companies including FAANG and leading enterprise organizations. His background includes conducting 500+ mock system design interviews and helping engineers successfully transition into senior, staff, and principal roles. Technical content regarding distributed systems, architecture patterns, and interview evaluation criteria is sourced from industry-standard references including engineering blogs from Netflix, Uber, and Slack, cloud provider architecture documentation from AWS, Google Cloud, and Microsoft Azure, and authoritative texts on distributed systems design.